Concurrency in Python - Quick Guide

Concurrency in Python - Introduction

In this chapter, we will understand the concept of concurrency in Python and learn about threads and processes.

What is Concurrency?

In simple words, concurrency is the occurrence of two or more events at the same time. Concurrency is a natural phenomenon because many events occur simultaneously at any given time.

In terms of programming, concurrency is when two tasks overlap in execution. With concurrent programming, the performance of our applications and software systems can be improved because we can concurrently deal with the requests rather than waiting for a previous one to be completed.

Historical Review of Concurrency

The following points give a brief historical review of concurrency −

From the concept of railroads

Concurrency is closely related to the concept of railroads. With the railroads, there was a need to handle multiple trains on the same railroad system in such a way that every train would get to its destination safely.

Concurrent computing in academia

The interest in computer science concurrency began with the research paper published by Edsger W. Dijkstra in 1965. In this paper, he identified and solved the problem of mutual exclusion, the property of concurrency control.

High-level concurrency primitives

In recent times, programmers are getting improved concurrent solutions because of the introduction of high-level concurrency primitives.

Improved concurrency with programming languages

Programming languages such as Google’s Golang, Rust and Python have made incredible developments in areas which help us get better concurrent solutions.

What is thread & multithreading?

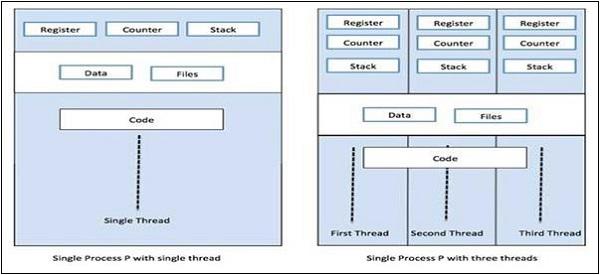

A thread is the smallest unit of execution that can be performed in an operating system. It is not itself a program but runs within a program. In other words, threads are not independent of one another. Each thread shares the code section, data section, etc. with other threads. Threads are also known as lightweight processes.

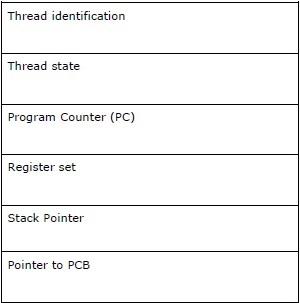

A thread consists of the following components: a program counter, a register set and a stack.

Multithreading, on the other hand, is the ability of a CPU, with support from the operating system, to execute multiple threads concurrently. The main idea of multithreading is to achieve parallelism by dividing a process into multiple threads. The concept of multithreading can be understood with the help of the following example.

Example

Suppose we are running a particular process wherein we open MS Word to type content into it. One thread will be assigned to open MS Word and another thread will be required to type content in it. Now, if we want to edit the existing content, then another thread will be required to do the editing task, and so on.

What is process & multiprocessing?

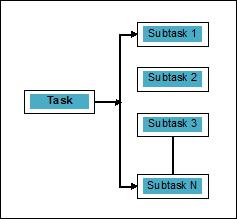

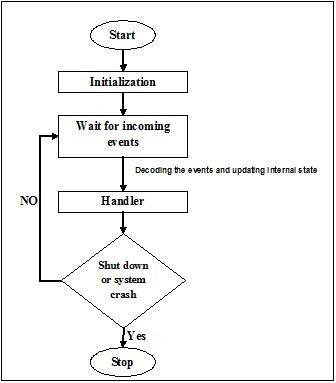

A process is defined as an entity which represents the basic unit of work to be implemented in the system. To put it in simple terms, we write our computer programs in a text file and when we execute this program, it becomes a process that performs all the tasks mentioned in the program. During its life cycle, a process passes through different stages: Start, Ready, Running, Waiting and Terminating.

Following diagram shows the different stages of a process −

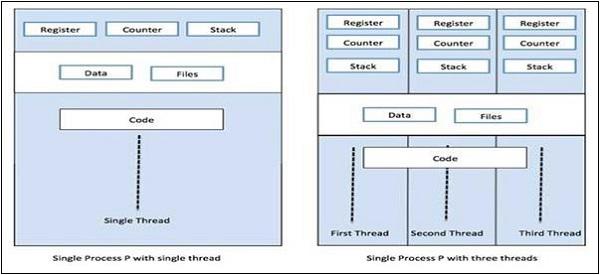

A process can have only one thread, called the primary thread, or multiple threads, each having its own set of registers, program counter and stack. The following diagram shows the difference −

Multiprocessing, on the other hand, is the use of two or more CPU units within a single computer system. Our primary goal is to get the full potential from our hardware. To achieve this, we need to utilize all the CPU cores available in our computer system. Multiprocessing is the best approach to do so.

Python is one of the most popular programming languages. The following are some reasons that make it suitable for concurrent applications −

Syntactic sugar

Syntactic sugar is syntax within a programming language that is designed to make things easier to read or to express. It makes the language “sweeter” for human use: things can be expressed more clearly, more concisely, or in an alternative style based on preference. Python comes with Magic methods, which can be defined to act on objects. These Magic methods are used as syntactic sugar and bound to more easy-to-understand keywords.

Large Community

Python language has witnessed a massive adoption rate amongst data scientists and mathematicians, working in the field of AI, machine learning, deep learning and quantitative analysis.

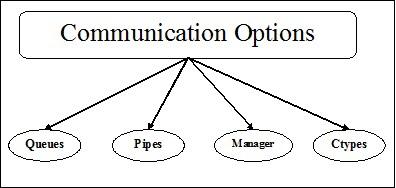

Useful APIs for concurrent programming

Python 2 and 3 have a large number of APIs dedicated to parallel/concurrent programming. The most popular of them are threading, concurrent.futures, multiprocessing, asyncio, gevent and greenlets.

Limitations of Python in implementing concurrent applications

Python comes with a limitation for concurrent applications, called the GIL (Global Interpreter Lock). In CPython, the GIL does not allow the threads of one process to execute Python code on multiple CPU cores at the same time, so multithreading alone does not give a parallel speed-up for CPU-bound Python code. We can understand the concept of GIL as follows −

GIL (Global Interpreter Lock)

It is one of the most controversial topics in the Python world. In CPython, GIL is the mutex - the mutual exclusion lock, which makes things thread safe. In other words, we can say that GIL prevents multiple threads from executing Python code in parallel. The lock can be held by only one thread at a time and if we want to execute a thread then it must acquire the lock first. The diagram shown below will help you understand the working of GIL.

However, some libraries and alternative implementations are less affected by the GIL. NumPy releases the GIL during many of its computations, while Jython and IronPython are Python implementations that do not have a GIL at all.
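The effect of the GIL can be explored with a small experiment. The sketch below is only illustrative: the function names count_down, run_sequential and run_threaded are made up for this example, and the timings depend on the machine and on the Python build, so no particular numbers are claimed. On a standard CPython build with the GIL, the threaded run of this CPU-bound loop is usually not faster than the sequential run.

```python
import threading
import time


def count_down(n):
    """CPU-bound busy loop; returns the number of iterations performed."""
    steps = 0
    while n > 0:
        n -= 1
        steps += 1
    return steps


def run_sequential(n, repeats=2):
    """Run the loop `repeats` times, one after another, and time it."""
    start = time.perf_counter()
    results = [count_down(n) for _ in range(repeats)]
    return results, time.perf_counter() - start


def run_threaded(n, repeats=2):
    """Run the loop in `repeats` threads and time it."""
    results = [None] * repeats

    def worker(index):
        # Each thread writes only to its own slot of the list.
        results[index] = count_down(n)

    threads = [threading.Thread(target=worker, args=(i,)) for i in range(repeats)]
    start = time.perf_counter()
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results, time.perf_counter() - start


if __name__ == "__main__":
    _, seq_time = run_sequential(200_000)
    _, thr_time = run_threaded(200_000)
    print("sequential: {:.4f}s, threaded: {:.4f}s".format(seq_time, thr_time))
```

Both versions compute the same results; only the elapsed time is worth comparing.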

Concurrency vs Parallelism

Both concurrency and parallelism are used in relation to multithreaded programs, but there is a lot of confusion about the similarities and differences between them. The big question in this regard: is concurrency parallelism or not? Although the two terms appear quite similar, the answer to the above question is NO; concurrency and parallelism are not the same. Now, if they are not the same, then what is the basic difference between them?

In simple terms, concurrency deals with managing the access to shared state from different threads and on the other side, parallelism deals with utilizing multiple CPUs or its cores to improve the performance of hardware.

Concurrency in Detail

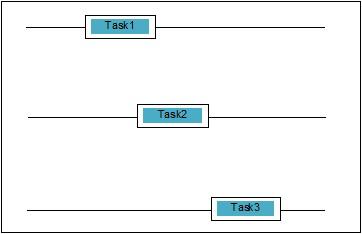

Concurrency is when two tasks overlap in execution. It could be a situation where an application is progressing on more than one task at the same time. We can understand it diagrammatically; multiple tasks are making progress at the same time, as follows −

Levels of Concurrency

In this section, we will discuss the three important levels of concurrency in terms of programming −

Low-Level Concurrency

In this level of concurrency, there is explicit use of atomic operations. This kind of concurrency is rarely used for application building, as it is very error-prone and difficult to debug. Python does not support this kind of concurrency directly.

Mid-Level Concurrency

In this level of concurrency, there is no explicit use of atomic operations; explicit locks are used instead. Python and other programming languages support this kind of concurrency, and it is the level that most application programmers use.
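A minimal sketch of mid-level concurrency with an explicit lock, using threading.Lock from the standard library. The names counter, increment and run are hypothetical and chosen only for this illustration.

```python
import threading

counter = 0
counter_lock = threading.Lock()


def increment(times):
    """Add 1 to the shared counter `times` times, holding the lock each time."""
    global counter
    for _ in range(times):
        with counter_lock:  # only one thread may update the counter at a time
            counter += 1


def run(num_threads=4, times=10_000):
    """Start several threads that all update the same counter."""
    global counter
    counter = 0
    threads = [threading.Thread(target=increment, args=(times,))
               for _ in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter


if __name__ == "__main__":
    print(run())
```

Because every update happens while the lock is held, the final value equals num_threads multiplied by times.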

High-Level Concurrency

In this concurrency, neither explicit atomic operations nor explicit locks are used. Python has concurrent.futures module to support such kind of concurrency.
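As a rough sketch of high-level concurrency, the example below uses ThreadPoolExecutor from concurrent.futures. No locks or atomic operations appear in the user code; the pool handles the coordination. The function names square and squares_concurrently are assumptions made for this example.

```python
from concurrent.futures import ThreadPoolExecutor


def square(x):
    """The task that each worker thread runs."""
    return x * x


def squares_concurrently(values, max_workers=4):
    """Apply square() to every value using a pool of worker threads."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() returns the results in the same order as the inputs.
        return list(pool.map(square, values))


if __name__ == "__main__":
    print(squares_concurrently([1, 2, 3, 4]))
```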

Properties of Concurrent Systems

For a program or concurrent system to be correct, some properties must be satisfied by it. Properties related to the termination of system are as follows −

Correctness property

The correctness property means that the program or the system must provide the desired correct answer. To keep it simple, we can say that the system must map the starting program state to final state correctly.

Safety property

The safety property means that the program or the system must remain in a “good” or “safe” state and never does anything “bad”.

Liveness property

This property means that a program or system must "make progress" and will eventually reach some desirable state.

Actors of concurrent systems

This is one common property of concurrent system in which there can be multiple processes and threads, which run at the same time to make progress on their own tasks. These processes and threads are called actors of the concurrent system.

Resources of Concurrent Systems

The actors must utilize the resources such as memory, disk, printer etc. in order to perform their tasks.

Certain set of rules

Every concurrent system must possess a set of rules to define the kind of tasks to be performed by the actors and the timing for each. The tasks could be acquiring of locks, memory sharing, modifying the state, etc.

Barriers of Concurrent Systems

While implementing concurrent systems, the programmer must take into consideration the following two important issues, which can be the barriers of concurrent systems −

Sharing of data

An important issue while implementing concurrent systems is the sharing of data among multiple threads or processes. The programmer must ensure that locks protect the shared data so that all accesses to it are serialized and only one thread or process can access the shared data at a time. When multiple threads or processes try to access the same shared data, all but one of them are blocked and remain idle. In other words, only one process or thread can make progress while the lock is in force.

There are some simple solutions to remove the above-mentioned barrier −

Data Sharing Restriction

The simplest solution is not to share any mutable data. In this case, we need not use explicit locking, and the barrier of concurrency due to mutable data is removed.

Data Structure Assistance

Many times, concurrent processes need to access the same data at the same time. An alternative to using explicit locks is to use a data structure that supports concurrent access. For example, we can use the queue module, which provides thread-safe queues. We can also use the multiprocessing.JoinableQueue class for multiprocessing-based concurrency.
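The idea can be sketched with queue.Queue and two threads. The names producer, consumer and run_pipeline, and the use of None as an end-of-data marker, are assumptions made for this illustration.

```python
import queue
import threading


def producer(q, items):
    """Put every item on the queue, then a sentinel to signal the end."""
    for item in items:
        q.put(item)
    q.put(None)  # None marks "no more data" in this sketch


def consumer(q, results):
    """Take items off the queue until the sentinel arrives."""
    while True:
        item = q.get()
        if item is None:
            break
        results.append(item * 2)


def run_pipeline(items):
    """Connect one producer thread and one consumer thread with a queue."""
    q = queue.Queue()  # thread-safe; no explicit lock in our code
    results = []
    threads = [
        threading.Thread(target=producer, args=(q, items)),
        threading.Thread(target=consumer, args=(q, results)),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


if __name__ == "__main__":
    print(run_pipeline([1, 2, 3]))
```

With a single consumer, the results keep the order in which the items were produced, because the queue is first-in, first-out.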

Immutable Data Transfer

Sometimes the data structure that we are using, say a concurrent queue, is not suitable. In that case, we can pass immutable data without locking it.

Mutable Data Transfer

In continuation of the above solution, if it is required to pass mutable data rather than immutable data, then we can pass the mutable data in a read-only form.
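One way to hand out mutable data as read only is types.MappingProxyType, which wraps a dictionary in a read-only view. This is only a sketch of one possible approach; the names settings, read_setting and try_write are hypothetical. Note that the proxy is a view, so the code that owns the original dictionary can still change it.

```python
import threading
from types import MappingProxyType

# The owner keeps the mutable dictionary; other threads get a read-only view.
settings = {"timeout": 30, "retries": 3}
read_only_settings = MappingProxyType(settings)


def read_setting(view, key, out):
    """Reader thread: looks a value up through the read-only view."""
    out.append(view[key])


def try_write(view):
    """Return True if writing through the view succeeded, False otherwise."""
    try:
        view["timeout"] = 99
    except TypeError:
        # MappingProxyType does not support item assignment.
        return False
    return True


def read_from_threads(view, key, num_threads=3):
    """Let several threads read the same key without any locking."""
    out = []
    threads = [threading.Thread(target=read_setting, args=(view, key, out))
               for _ in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return out


if __name__ == "__main__":
    print(read_from_threads(read_only_settings, "timeout"))
    print(try_write(read_only_settings))
```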

Sharing of I/O Resources

Another important issue in implementing concurrent systems is the use of I/O resources by threads or processes. The problem arises when one thread or process uses the I/O for a long time while the others sit idle. We can see this kind of barrier while working with an I/O-heavy application. It can be understood with the help of an example: requesting pages from a web browser, which is an I/O-heavy task. Here, if the rate at which the data is requested is slower than the rate at which it is consumed, then we have an I/O barrier in our concurrent system.

The following Python script is for requesting a web page and getting the time our network took to get the requested page −

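    import time
    import urllib.request

    ts = time.time()  # time just before the request is sent
    req = urllib.request.urlopen('http://www.howcodex.com')
    pageHtml = req.read()  # read the whole response body
    te = time.time()  # time once the page has been fetched

    print("Page Fetching Time: {} Seconds".format(te - ts))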

After executing the above script, we can get the page fetching time as shown below.

Output

Page Fetching Time: 1.0991398811340332 Seconds

We can see that the time to fetch the page is more than one second. Now, if we want to fetch thousands of different web pages one after another, we can imagine how much time the network would take.
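One common way to reduce this waiting is to overlap the requests with a thread pool. The sketch below does not touch the network: fake_fetch is a made-up stand-in that sleeps for a fixed delay to imitate network latency, and the example.com URLs are placeholders. With a real fetch function, the same structure would apply.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_fetch(url, delay=0.1):
    """Stand-in for a page download; sleeps to imitate network latency."""
    time.sleep(delay)
    return "<html>{}</html>".format(url)


def fetch_all(urls, max_workers=5):
    """Fetch all URLs with a pool of threads and report the elapsed time."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        pages = list(pool.map(fake_fetch, urls))
    return pages, time.perf_counter() - start


if __name__ == "__main__":
    urls = ["http://example.com/page{}".format(i) for i in range(5)]
    pages, elapsed = fetch_all(urls)
    print("Fetched {} pages in {:.2f} seconds".format(len(pages), elapsed))
```

Because the threads spend their time waiting rather than computing, the five simulated downloads overlap and the total time stays well below five times the single delay.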

What is Parallelism?

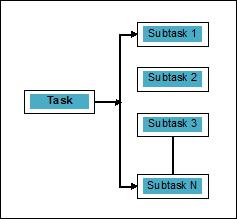

Parallelism may be defined as the art of splitting a task into subtasks that can be processed simultaneously. It differs from concurrency, as discussed above, in which two or more tasks make progress over the same period of time. We can understand it diagrammatically; a task is broken into a number of subtasks that can be processed in parallel, as follows −

To get more idea about the distinction between concurrency and parallelism, consider the following points −

Concurrent but not parallel

An application can be concurrent but not parallel. This means that it processes more than one task at the same time but the tasks are not broken down into subtasks.

Parallel but not concurrent

An application can be parallel but not concurrent. This means that it works on only one task at a time, and that task is broken down into subtasks that can be processed in parallel.

Neither parallel nor concurrent

An application can be neither parallel nor concurrent. This means that it works on only one task at a time and the task is never broken into subtasks.

Both parallel and concurrent

An application can be both parallel and concurrent. This means that it works on multiple tasks at a time and each task is broken into subtasks that are executed in parallel.

Necessity of Parallelism

We can achieve parallelism by distributing the subtasks among different cores of single CPU or among multiple computers connected within a network.
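The idea of splitting a task into subtasks can be sketched as follows. The helper names split and parallel_sum are hypothetical. The sketch uses ThreadPoolExecutor to keep the example simple; under the GIL this shows the structure rather than a real speed-up, and for CPU-bound work ProcessPoolExecutor, which offers the same map interface, would be the usual substitute.

```python
from concurrent.futures import ThreadPoolExecutor


def split(data, parts):
    """Break a list into at most `parts` chunks of nearly equal size."""
    size = max(1, (len(data) + parts - 1) // parts)
    return [data[i:i + size] for i in range(0, len(data), size)]


def parallel_sum(data, parts=4):
    """Sum each chunk as a separate subtask, then combine the partial sums."""
    chunks = split(data, parts)
    with ThreadPoolExecutor(max_workers=parts) as pool:
        partial_sums = list(pool.map(sum, chunks))
    return sum(partial_sums)


if __name__ == "__main__":
    print(parallel_sum(list(range(1, 101))))
```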

Consider the following important points to understand why it is necessary to achieve parallelism −

Efficient code execution

With the help of parallelism, we can run our code efficiently. It will save our time because the same code in parts is running in parallel.

Faster than sequential computing

Sequential computing is constrained by physical and practical factors due to which it is not possible to get faster computing results. On the other hand, this issue is solved by parallel computing and gives us faster computing results than sequential computing.

Less execution time

Parallel processing reduces the execution time of program code.

If we talk about real life example of parallelism, the graphics card of our computer is the example that highlights the true power of parallel processing because it has hundreds of individual processing cores that work independently and can do the execution at the same time. Due to this reason, we are able to run high-end applications and games as well.

Understanding of the processors for implementation

We know about concurrency, parallelism and the difference between them, but what about the system on which they are to be implemented? It is necessary to understand the system on which we are going to implement our software, because this lets us take informed decisions while designing it. We have the following two kinds of processors −

Single-core processors

Single-core processors are capable of executing one thread at any given time. These processors use context switching to store all the necessary information for a thread at a specific time and then restore the information later. The context switching mechanism helps us make progress on a number of threads within a given second, and it looks as if the system is working on multiple things.

Single-core processors come with many advantages. These processors require less power and there is no complex communication protocol between multiple cores. On the other hand, the speed of single-core processors is limited and they are not suitable for larger applications.

Multi-core processors

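Multi-core processors have multiple independent processing units, also called cores.

Each core contains everything it needs to execute a sequence of stored instructions, so several threads can run at the same time, one per core. Context switching is still needed when there are more runnable threads than cores, but each core has to switch less often.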

Fetch-Decode-Execute Cycle

The cores of multi-core processors follow a cycle for executing. This cycle is called the Fetch-Decode-Execute cycle. It involves the following steps −

Fetch

This is the first step of cycle, which involves the fetching of instructions from the program memory.

Decode

Recently fetched instructions would be converted to a series of signals that will trigger other parts of the CPU.

Execute

It is the final step in which the fetched and the decoded instructions would be executed. The result of execution will be stored in a CPU register.
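The three steps can be imitated with a toy interpreter. This is a deliberately simplified model, not a description of any real CPU: the instruction names LOAD, ADD and HALT, the two registers A and B, and the function run are all invented for this sketch.

```python
def run(program):
    """Execute a tiny made-up instruction set and return the registers."""
    registers = {"A": 0, "B": 0}
    pc = 0  # program counter: index of the next instruction

    while pc < len(program):
        # Fetch: read the next instruction from program memory.
        instruction = program[pc]
        pc += 1

        # Decode: split the instruction into an opcode and its operands.
        opcode, *operands = instruction

        # Execute: perform the operation and store the result in a register.
        if opcode == "LOAD":
            register, value = operands
            registers[register] = value
        elif opcode == "ADD":
            destination, source = operands
            registers[destination] += registers[source]
        elif opcode == "HALT":
            break
        else:
            raise ValueError("unknown opcode: {}".format(opcode))

    return registers


program = [
    ("LOAD", "A", 5),
    ("LOAD", "B", 15),
    ("ADD", "A", "B"),
    ("HALT",),
]

if __name__ == "__main__":
    print(run(program))
```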

One advantage here is that execution on multi-core processors is faster than on single-core processors, which makes them suitable for larger applications. On the other hand, the complex communication protocol between multiple cores is an issue, and multiple cores require more power than single-core processors.

System and Memory Architecture

There are different system and memory architecture styles that need to be considered while designing a program or concurrent system. This is necessary because a system and memory style that is suitable for one task may be error-prone for another.

Computer system architectures supporting concurrency

In 1972, Michael Flynn gave a taxonomy for categorizing different styles of computer system architecture. This taxonomy defines four different styles as follows −

- Single instruction stream, single data stream (SISD)

- Single instruction stream, multiple data stream (SIMD)

- Multiple instruction stream, single data stream (MISD)

- Multiple instruction stream, multiple data stream (MIMD).

Single instruction stream, single data stream (SISD)

As the name suggests, such systems have one sequential incoming data stream and one single processing unit to execute the data stream. They are just like uniprocessor systems having a sequential computing architecture. Following is the architecture of SISD −

Advantages of SISD

The advantages of SISD architecture are as follows −

- It requires less power.

- There is no issue of complex communication protocol between multiple cores.

Disadvantages of SISD

The disadvantages of SISD architecture are as follows −

- The speed of SISD architecture is limited just like single-core processors.

- It is not suitable for larger applications.

Single instruction stream, multiple data stream (SIMD)

As the name suggests, such systems have multiple incoming data streams and a number of processing units that can act on a single instruction at any given time. They are just like multiprocessor systems having a parallel computing architecture. Following is the architecture of SIMD −

The best example of SIMD is the graphics card. These cards have hundreds of individual processing units. If we talk about the computational difference between SISD and SIMD, then for adding the arrays [5, 15, 20] and [15, 25, 10], the SISD architecture would have to perform three different add operations. On the other hand, with the SIMD architecture, we can add them in a single add operation.
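The arithmetic of this example can be written out as a small conceptual model. The sketch below only counts operations under each model; plain Python does not issue SIMD instructions here, and the function names scalar_add and vector_add, as well as the operation counts they return, are modelling assumptions.

```python
import operator

a = [5, 15, 20]
b = [15, 25, 10]


def scalar_add(xs, ys):
    """SISD-style model: one add operation per pair of elements."""
    result = []
    operations = 0
    for x, y in zip(xs, ys):
        result.append(x + y)
        operations += 1
    return result, operations


def vector_add(xs, ys):
    """SIMD-style model: the whole array is treated as one add operation."""
    result = list(map(operator.add, xs, ys))
    return result, 1  # modelled as a single instruction over all elements


if __name__ == "__main__":
    print(scalar_add(a, b))
    print(vector_add(a, b))
```

Both models produce the same sums; they differ only in how many add operations are counted.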

Advantages of SIMD

The advantages of SIMD architecture are as follows −

- The same operation on multiple elements can be performed using one instruction only.

- Throughput of the system can be increased by increasing the number of cores of the processor.

- Processing speed is higher than SISD architecture.

Disadvantages of SIMD

The disadvantages of SIMD architecture are as follows −

- There is complex communication between the cores of the processor.

- The cost is higher than SISD architecture.

Multiple instruction stream, single data stream (MISD)

Systems with the MISD architecture have a number of processing units performing different operations by executing different instructions on the same data set. Following is the architecture of MISD −

The representatives of MISD architecture do not yet exist commercially.

Multiple instruction stream, multiple data stream (MIMD)

In a system using the MIMD architecture, each processor in a multiprocessor system can execute different sets of instructions independently on different data sets in parallel. This contrasts with the SIMD architecture, in which a single operation is executed on multiple data sets. Following is the architecture of MIMD −

A normal multiprocessor uses the MIMD architecture. These architectures are basically used in a number of application areas such as computer-aided design/computer-aided manufacturing, simulation, modeling, communication switches, etc.

Memory architectures supporting concurrency

While working with concepts like concurrency and parallelism, there is always a need to speed up the programs. One solution found by computer designers is to create shared-memory multi-computers, i.e., computers having a single physical address space which is accessed by all the cores of a processor. In this scenario, there can be a number of different styles of architecture, but the following are the three important ones −

Uniform Memory Access (UMA)

In this model, all the processors share the physical memory uniformly. All the processors have equal access time to all the memory words. Each processor may have a private cache memory. The peripheral devices follow a set of rules.

When all the processors have equal access to all the peripheral devices, the system is called a symmetric multiprocessor. When only one or a few processors can access the peripheral devices, the system is called an asymmetric multiprocessor.

Non-uniform Memory Access (NUMA)

In the NUMA multiprocessor model, the access time varies with the location of the memory word. Here, the shared memory is physically distributed among all the processors, called local memories. The collection of all local memories forms a global address space which can be accessed by all the processors.

Cache Only Memory Architecture (COMA)

The COMA model is a specialized version of the NUMA model. Here, all the distributed main memories are converted to cache memories.

Concurrency in Python - Threads

In general, a thread is a very thin twisted string, usually of cotton or silk, used for sewing clothes and such. The same term is also used in the world of computer programming. Now, how do we relate the thread used for sewing clothes and the thread used in computer programming? The roles performed by the two threads are similar. In clothes, thread holds the cloth together; in computer programming, a thread holds the computer program together and allows the program to execute sequential actions or many actions at once.

A thread is the smallest unit of execution in an operating system. It is not in itself a program but runs within a program. In other words, threads are not independent of one another and share the code section, data section, etc. with other threads. These threads are also known as lightweight processes.

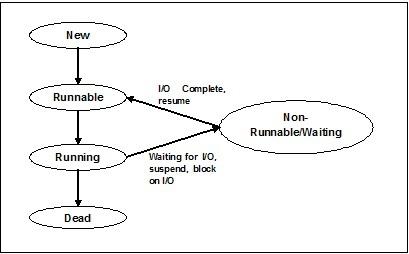

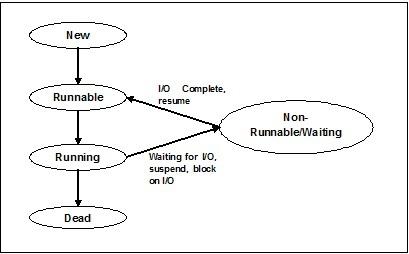

States of Thread

To understand the functionality of threads in depth, we need to learn about the lifecycle of the threads or the different thread states. Typically, a thread can exist in five distinct states. The different states are shown below −

New Thread

A new thread begins its life cycle in the new state. However, at this stage, it has not yet started and it has not been allocated any resources. We can say that it is just an instance of an object.

Runnable

As the newly born thread is started, the thread becomes runnable, i.e. waiting to run. In this state, it has all the resources but the task scheduler has not yet scheduled it to run.

Running

In this state, the thread, which has been chosen by the task scheduler to run, makes progress and executes its task. From here, the thread can go to either the dead state or the non-running/waiting state.

Non-running/waiting

In this state, the thread is paused because it is waiting either for the response to some I/O request or for another thread to complete its execution.

Dead

A running thread enters the dead (terminated) state when it completes its task or otherwise terminates.
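Python's threading module does not expose all five states directly; it only reports whether a thread is alive. Still, the life cycle can be observed in a rough way, as in the sketch below. The function observe_lifecycle and the keys of the dictionary it returns are names chosen for this example.

```python
import threading


def observe_lifecycle():
    """Record is_alive() at three points in a thread's life."""
    gate = threading.Event()
    worker = threading.Thread(target=gate.wait)

    # New: the Thread object exists but has not been started.
    states = {"new": worker.is_alive()}

    # Started: the thread is alive and waiting for the event to be set.
    worker.start()
    states["started"] = worker.is_alive()

    # Dead: once the event is set, the target returns and the thread ends.
    gate.set()
    worker.join()
    states["dead"] = worker.is_alive()
    return states


if __name__ == "__main__":
    print(observe_lifecycle())
```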

The following diagram shows the complete life cycle of a thread −

Types of Thread

In this section, we will see the different types of thread. The types are described below −

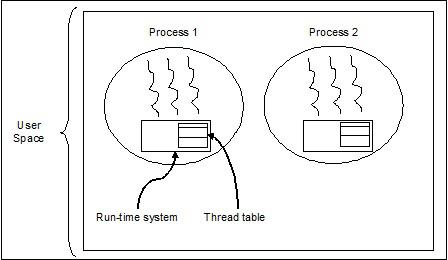

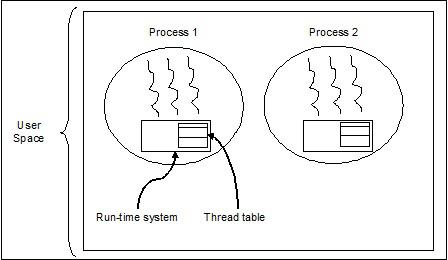

User Level Threads

These are user-managed threads.

In this case, the kernel is not aware of the existence of threads. The thread library contains code for creating and destroying threads, for passing messages and data between threads, for scheduling thread execution and for saving and restoring thread contexts. The application starts with a single thread.

Examples of thread libraries that can be implemented at user level are −

- Java threads

- POSIX threads

Advantages of User level Threads

Following are the different advantages of user level threads −

- Thread switching does not require Kernel mode privileges.

- User level thread can run on any operating system.

- Scheduling can be application specific in the user level thread.

- User level threads are fast to create and manage.

Disadvantages of User level Threads

Following are the different disadvantages of user level threads −

- In a typical operating system, most system calls are blocking, so one blocking call made by a user level thread blocks the entire process.

- Multithreaded application cannot take advantage of multiprocessing.

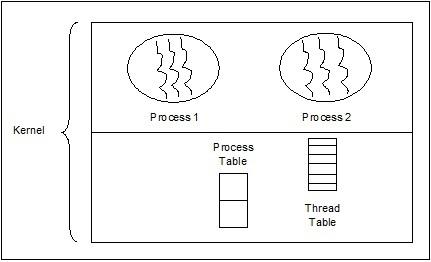

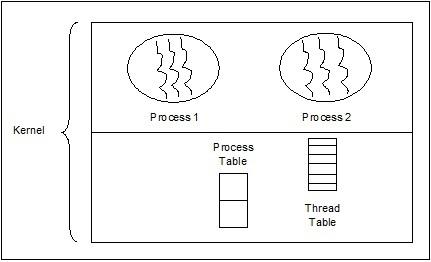

Kernel Level Threads

These are threads managed by the operating system; they act on the kernel, which is the core of the operating system.

In this case, the Kernel does thread management. There is no thread management code in the application area. Kernel threads are supported directly by the operating system. Any application can be programmed to be multithreaded. All of the threads within an application are supported within a single process.

The Kernel maintains context information for the process as a whole and for individual threads within the process. Scheduling by the Kernel is done on a thread basis. The Kernel performs thread creation, scheduling and management in Kernel space. Kernel threads are generally slower to create and manage than user threads. Examples of operating systems that provide kernel level threads are Windows and Solaris.

Advantages of Kernel Level Threads

Following are the different advantages of kernel level threads −

- Kernel can simultaneously schedule multiple threads from the same process on multiple processors.

- If one thread in a process is blocked, the Kernel can schedule another thread of the same process.

- Kernel routines themselves can be multithreaded.

Disadvantages of Kernel Level Threads

Following are the different disadvantages of kernel level threads −

- Kernel threads are generally slower to create and manage than user threads.

- Transfer of control from one thread to another within the same process requires a mode switch to the Kernel.

Thread Control Block - TCB

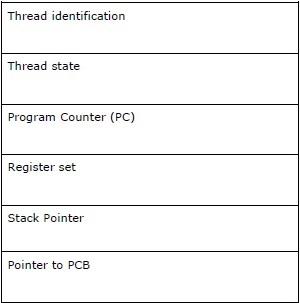

Thread Control Block (TCB) may be defined as the data structure in the kernel of the operating system that mainly contains information about a thread. The thread-specific information stored in the TCB also highlights some important information about the process the thread belongs to.

Consider the following points about the thread information contained in the TCB −

Thread identification − It is the unique thread id (tid) assigned to every new thread.

Thread state − It contains the information related to the state (Running, Runnable, Non-Running, Dead) of the thread.

Program Counter (PC) − It points to the current program instruction of the thread.

Register set − It contains the values of the registers assigned to the thread for computations.

Stack Pointer − It points to the thread's stack in the process. The stack contains the local variables under the thread's scope.

Pointer to PCB − It contains the pointer to the process control block (PCB) of the process that created that thread.
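The TCB itself lives in the kernel and is not accessible from Python, but the threading module exposes a few related pieces of information about a thread, such as its name and identifiers. The sketch below collects them; the function names describe_current_thread and describe_in_worker and the thread name "worker-1" are assumptions for this example, and native_id is read defensively because it is not available on every platform.

```python
import threading


def describe_current_thread():
    """Collect the identification details Python exposes for this thread."""
    thread = threading.current_thread()
    return {
        "name": thread.name,
        "ident": thread.ident,  # Python-level thread identifier
        "native_id": getattr(thread, "native_id", None),  # OS-level id, if any
        "alive": thread.is_alive(),
        "daemon": thread.daemon,
    }


def describe_in_worker():
    """Run describe_current_thread() inside a separate, named thread."""
    info = {}
    worker = threading.Thread(
        target=lambda: info.update(describe_current_thread()),
        name="worker-1",
    )
    worker.start()
    worker.join()
    return info


if __name__ == "__main__":
    print(describe_in_worker())
```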

Relation between process & thread

In multithreading, process and thread are two closely related terms with the same goal: making a computer able to do more than one thing at a time. A process can contain one or more threads but, on the contrary, a thread cannot contain a process. However, they both remain the two basic units of execution. A program, executing a series of instructions, initiates both the process and the thread.

The following table shows the comparison between process and thread −

| Process | Thread |
| --- | --- |
| Process is heavyweight or resource intensive. | Thread is lightweight and takes fewer resources than a process. |
| Process switching needs interaction with the operating system. | Switching between user level threads does not need interaction with the operating system. |
| In multiple processing environments, each process executes the same code but has its own memory and file resources. | All threads can share the same set of open files and child processes. |
| If a single-threaded process is blocked, no work in that process can proceed until it is unblocked. | While one thread is blocked and waiting, a second thread in the same task can run. |
| Multiple processes without using threads use more resources. | Multiple threaded processes use fewer resources. |
| In multiple processes, each process operates independently of the others. | One thread can read, write or change another thread's data. |
| A change in the parent process does not affect the child processes. | A change in the main thread may affect the behavior of the other threads of that process. |
| To communicate with sibling processes, processes must use inter-process communication. | Threads can directly communicate with other threads of that process. |

Concept of Multithreading

As we have discussed earlier, multithreading is the ability of a CPU, with support from the operating system, to execute multiple threads concurrently. The main idea of multithreading is to achieve parallelism by dividing a process into multiple threads. Put more simply, multithreading is a way of achieving multitasking by using threads.

The concept of multithreading can be understood with the help of the following example.

Example

Suppose we are running a process. The process could be for opening MS Word to write something. In such a process, one thread will be assigned to open MS Word and another thread will be required to write. Now, if we want to edit something, then another thread will be required to do the editing task, and so on.

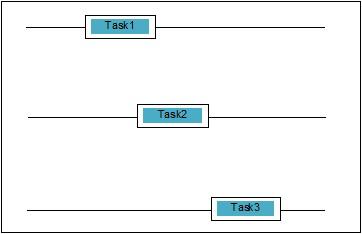

The following diagram helps us understand how multiple threads exist in memory −

We can see in the above diagram that more than one thread can exist within one process where every thread contains its own register set and local variables. Other than that, all the threads in a process share global variables.
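The difference between shared globals and per-thread data can be sketched with threading.local, which gives each thread its own private attributes. The names shared_log, local_state, worker and run are hypothetical and chosen only for this illustration.

```python
import threading

shared_log = []                  # global: visible to every thread
log_lock = threading.Lock()      # protects the shared list
local_state = threading.local()  # each thread sees its own attributes


def worker(name):
    """Store the name privately, then record it in the shared list."""
    local_state.name = name  # private to the thread that sets it
    with log_lock:
        shared_log.append(local_state.name)


def run(names):
    """Start one thread per name and return what they logged."""
    shared_log.clear()
    threads = [threading.Thread(target=worker, args=(n,)) for n in names]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sorted(shared_log)


if __name__ == "__main__":
    print(run(["a", "b", "c"]))
    # The main thread never set local_state.name, so it has no such attribute.
    print(hasattr(local_state, "name"))
```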

Pros of Multithreading

Let us now see a few advantages of multithreading. The advantages are as follows −

Speed of computation − Multithreading improves the speed of computation because each core or processor handles separate threads concurrently.

Program remains responsive − It allows a program to remain responsive because one thread waits for the input and another runs a GUI at the same time.

Access to global variables − In multithreading, all the threads of a particular process can access the global variables and if there is any change in global variable then it is visible to other threads too.

Utilization of resources − Running of several threads in each program makes better use of CPU and the idle time of CPU becomes less.

Sharing of data − There is no requirement of extra space for each thread because threads within a program can share same data.

Cons of Multithreading

Let us now see a few disadvantages of multithreading. The disadvantages are as follows −

Not suitable for single processor system − Multithreading finds it difficult to achieve performance in terms of speed of computation on single processor system as compared with the performance on multi-processor system.

Issue of security − As we know that all the threads within a program share same data, hence there is always an issue of security because any unknown thread can change the data.

Increase in complexity − Multithreading can increase the complexity of the program and debugging becomes difficult.

Lead to deadlock state − Multithreading can lead the program to potential risk of attaining the deadlock state.

Synchronization required − Synchronization is required to achieve mutual exclusion. This leads to more memory and CPU utilization.

Implementation of Threads

In this chapter, we will learn how to implement threads in Python.

Python Module for Thread Implementation

Python threads are sometimes called lightweight processes because threads occupy much less memory than processes. Threads allow performing multiple tasks at once. In Python, we have the following two modules that implement threads in a program −

<_thread> module

<threading> module

The main difference between these two modules is that the <_thread> module treats a thread as a function, whereas the <threading> module treats every thread as an object and implements it in an object-oriented way. Moreover, the <_thread> module is effective in low-level threading and has fewer capabilities than the <threading> module.

<_thread> module

In earlier versions of Python, we had the <thread> module, but it has been considered "deprecated" for quite a long time. Users have been encouraged to use the <threading> module instead. Therefore, in Python 3 the module "thread" is not available anymore. It has been renamed to "<_thread>" for backward compatibility in Python 3.

To generate new thread with the help of the <_thread> module, we need to call the start_new_thread method of it. The working of this method can be understood with the help of following syntax −

_thread.start_new_thread ( function, args[, kwargs] )

Here −

If we want to call function without passing an argument then we need to use an empty tuple of arguments in args.

This method call returns immediately, the child thread starts, and calls function with the passed list, if any, of args. The thread terminates as and when the function returns.

Example

Following is an example for generating new thread by using the <_thread> module. We are using the start_new_thread() method here.

    import _thread
    import time

    def print_time(threadName, delay):
        count = 0
        while count < 5:
            time.sleep(delay)
            count += 1
            print("%s: %s" % (threadName, time.ctime(time.time())))

    try:
        _thread.start_new_thread(print_time, ("Thread-1", 2,))
        _thread.start_new_thread(print_time, ("Thread-2", 4,))
    except:
        print("Error: unable to start thread")

    # Keep the main thread alive; the program (and its threads) ends when it exits.
    while 1:
        pass

Output

The following output will help us understand the generation of new threads with the help of the <_thread> module.

Thread-1: Mon Apr 23 10:03:33 2018

Thread-2: Mon Apr 23 10:03:35 2018

Thread-1: Mon Apr 23 10:03:35 2018

Thread-1: Mon Apr 23 10:03:37 2018

Thread-2: Mon Apr 23 10:03:39 2018

Thread-1: Mon Apr 23 10:03:39 2018

Thread-1: Mon Apr 23 10:03:41 2018

Thread-2: Mon Apr 23 10:03:43 2018

Thread-2: Mon Apr 23 10:03:47 2018

Thread-2: Mon Apr 23 10:03:51 2018

<threading> module

The <threading> module implements in an object oriented way and treats every thread as an object. Therefore, it provides much more powerful, high-level support for threads than the <_thread> module. This module is included with Python 2.4.

Additional methods in the <threading> module

The <threading> module comprises all the methods of the <_thread> module but it provides additional methods as well. The additional methods are as follows −

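threading.activeCount() − This method returns the number of thread objects that are active. In current Python versions it is spelled threading.active_count(); the camelCase name is deprecated.

threading.currentThread() − This method returns the Thread object corresponding to the caller's thread of control. The current spelling is threading.current_thread().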

threading.enumerate() − This method returns a list of all thread objects that are currently active.

For implementing threading, the <threading> module has the Thread class which provides the following methods −

run() − The run() method is the entry point for a thread.

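start() − The start() method starts a thread; it arranges for run() to be called in the new thread.

join([time]) − The join() method waits for the thread to terminate, optionally for at most time seconds.

isAlive() − The isAlive() method checks whether a thread is still executing. It is spelled is_alive() in Python 3, and the isAlive() spelling was removed in Python 3.9.

getName() − The getName() method returns the name of a thread. Reading the name attribute is the preferred way in current Python versions.

setName() − The setName() method sets the name of a thread; assigning to the name attribute does the same.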
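The methods listed above can be tried out with a minimal sketch that uses the snake_case spellings available in Python 3. The Event object and the names release, wait_for_release and worker are assumptions made for this illustration; the event only keeps the thread alive long enough to inspect it.

```python
import threading

release = threading.Event()

def wait_for_release():
    release.wait()          # block until the main thread calls release.set()

worker = threading.Thread(target=wait_for_release, name="waiter")
alive_before_start = worker.is_alive()      # not started yet
worker.start()
alive_while_waiting = worker.is_alive()     # started and blocked inside run()
count_while_waiting = threading.active_count()
release.set()                               # let the thread finish
worker.join(timeout=5)
alive_after_join = worker.is_alive()

print(worker.name, alive_before_start, alive_while_waiting, alive_after_join)
```

The thread reports itself as alive only between start() and the point where its target function returns.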

How to create threads using the <threading> module?

In this section, we will learn how to create threads using the <threading> module. Follow these steps to create a new thread using the <threading> module −

Step 1 − In this step, we need to define a new subclass of the Thread class.

Step 2 − Then, for adding additional arguments, we need to override the __init__(self [,args]) method.

Step 3 − In this step, we need to override the run(self) method to implement what the thread should do when started.

Now, after creating the new Thread subclass, we can create an instance of it and then start a new thread by invoking the start(), which in turn calls the run() method.

Example

Consider this example to learn how to generate a new thread by using the <threading> module.

    import threading
    import time

    exitFlag = 0

    class myThread(threading.Thread):
        def __init__(self, threadID, name, counter):
            threading.Thread.__init__(self)
            self.threadID = threadID
            self.name = name
            self.counter = counter

        def run(self):
            print("Starting " + self.name)
            print_time(self.name, self.counter, 5)
            print("Exiting " + self.name)

    def print_time(threadName, delay, counter):
        while counter:
            if exitFlag:
                # Stop early when the flag is set; returning ends the loop.
                return
            time.sleep(delay)
            print("%s: %s" % (threadName, time.ctime(time.time())))
            counter -= 1

    thread1 = myThread(1, "Thread-1", 1)
    thread2 = myThread(2, "Thread-2", 2)

    thread1.start()
    thread2.start()
    thread1.join()
    thread2.join()
    print("Exiting Main Thread")

Output

Now, consider the following output −

Starting Thread-1

Starting Thread-2

Thread-1: Mon Apr 23 10:52:09 2018

Thread-1: Mon Apr 23 10:52:10 2018

Thread-2: Mon Apr 23 10:52:10 2018

Thread-1: Mon Apr 23 10:52:11 2018

Thread-1: Mon Apr 23 10:52:12 2018

Thread-2: Mon Apr 23 10:52:12 2018

Thread-1: Mon Apr 23 10:52:13 2018

Exiting Thread-1

Thread-2: Mon Apr 23 10:52:14 2018

Thread-2: Mon Apr 23 10:52:16 2018

Thread-2: Mon Apr 23 10:52:18 2018

Exiting Thread-2

Exiting Main Thread

Python Program for Various Thread States

There are five thread states: new, runnable, running, waiting and dead. Of these five, we will mainly focus on three: running, waiting and dead. A thread holds its resources in the running state, waits for resources in the waiting state, and finally releases any resources it acquired in the dead state.

The following Python program, with the help of the start(), sleep() and join() methods, will show how a thread enters the running, waiting and dead states respectively.

Step 1 − Import the necessary modules, <threading> and <time>

import threading

import time

Step 2 − Define a function, which will be called while creating a thread.

    def thread_states():
        print("Thread entered in running state")

Step 3 − We use the sleep() method of the time module, inside the same function, to make our thread wait for, say, 2 seconds.

        time.sleep(2)

Step 4 − Now, we are creating a thread named T1, which takes the function defined above as its target.

T1 = threading.Thread(target=thread_states)

Step 5 − Now, with the help of the start() function we can start our thread. It will produce the message, which has been set by us while defining the function.

T1.start()

Thread entered in running state

Step 6 − Finally, we call the join() method, which blocks the caller until the thread finishes its execution. Once its function returns, the thread is in the dead state.

T1.join()
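Put together, the steps above can be sketched as a single script. The is_alive() checks and the shortened sleep of 0.5 seconds are additions made for this illustration and are not part of the original steps.

```python
import threading
import time

def thread_states():
    print("Thread entered in running state")
    time.sleep(0.5)    # waiting state (shortened from the 2 seconds used above)

T1 = threading.Thread(target=thread_states)
alive_before = T1.is_alive()     # new: created but not started
T1.start()                       # running, then waiting inside sleep()
T1.join()                        # returns once the thread has finished
alive_after = T1.is_alive()      # dead: the target function has returned

print("Alive before start():", alive_before)
print("Alive after join():", alive_after)
```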

Starting a thread in Python

In Python, we can start a new thread in different ways, but the easiest one is to define its work as a single function. After defining the function, we can pass it as the target of a new threading.Thread object and start that object. Execute the following Python code to understand how this works −

    import threading
    import time
    import random

    def Thread_execution(i):
        print("Execution of Thread {} started\n".format(i))
        sleepTime = random.randint(1,4)
        time.sleep(sleepTime)
        print("Execution of Thread {} finished".format(i))

    for i in range(4):
        thread = threading.Thread(target=Thread_execution, args=(i,))
        thread.start()
        print("Active Threads:" , threading.enumerate())

Output

Execution of Thread 0 started

Active Threads:

[<_MainThread(MainThread, started 6040)>,

<HistorySavingThread(IPythonHistorySavingThread, started 5968)>,

<Thread(Thread-3576, started 3932)>]

Execution of Thread 1 started

Active Threads:

[<_MainThread(MainThread, started 6040)>,

<HistorySavingThread(IPythonHistorySavingThread, started 5968)>,

<Thread(Thread-3576, started 3932)>,

<Thread(Thread-3577, started 3080)>]

Execution of Thread 2 started

Active Threads:

[<_MainThread(MainThread, started 6040)>,

<HistorySavingThread(IPythonHistorySavingThread, started 5968)>,

<Thread(Thread-3576, started 3932)>,

<Thread(Thread-3577, started 3080)>,

<Thread(Thread-3578, started 2268)>]

Execution of Thread 3 started

Active Threads:

[<_MainThread(MainThread, started 6040)>,

<HistorySavingThread(IPythonHistorySavingThread, started 5968)>,

<Thread(Thread-3576, started 3932)>,

<Thread(Thread-3577, started 3080)>,

<Thread(Thread-3578, started 2268)>,

<Thread(Thread-3579, started 4520)>]

Execution of Thread 0 finished

Execution of Thread 1 finished

Execution of Thread 2 finished

Execution of Thread 3 finished

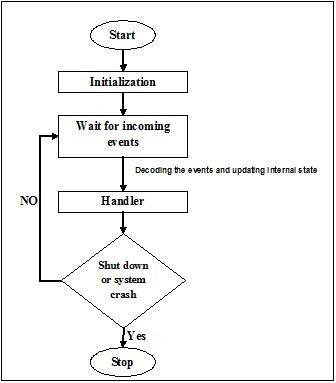

Daemon threads in Python

Before implementing daemon threads in Python, we need to know about daemon threads and their usage. In computing, a daemon is a background process that handles requests for various services such as data sending, file transfers, etc. It stays dormant when it is not required. The same task can be done with the help of non-daemon threads also. However, in that case, the main thread has to keep track of the non-daemon threads manually. On the other hand, if we are using daemon threads, the main thread can completely forget about them, and they will be killed when the program exits, that is, once the main thread and all other non-daemon threads have finished. Another important point is that we should use daemon threads only for non-essential tasks that would not affect us if they do not complete or get killed midway. Following is the implementation of daemon threads in Python −

    import threading
    import time

    def nondaemonThread():
        print("starting my thread")
        time.sleep(8)
        print("ending my thread")

    def daemonThread():
        while True:
            print("Hello")
            time.sleep(2)

    if __name__ == '__main__':
        # Separate variable names avoid shadowing the two functions above.
        worker = threading.Thread(target=nondaemonThread)
        background = threading.Thread(target=daemonThread)
        background.daemon = True    # must be set before start()
        background.start()
        worker.start()

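In the above code, there are two functions, namely nondaemonThread() and daemonThread(). The first function prints its state, sleeps for 8 seconds and prints again, while the daemonThread() function prints Hello every 2 seconds indefinitely. We can understand the difference between non-daemon and daemon threads with the help of the following output. When the code is run as a script, the daemon thread is terminated once the non-daemon thread has finished and the program exits; the Hello lines after "ending my thread" appear only when the interpreter stays alive, for example in an interactive session −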

Hello

starting my thread

Hello

Hello

Hello

Hello

ending my thread

Hello

Hello

Hello

Hello

Hello

Synchronizing Threads

Thread synchronization may be defined as a method that ensures two or more concurrent threads do not simultaneously execute the program segment known as the critical section. The critical section is the part of the program where the shared resource is accessed. Hence, synchronization is the process of making sure that two or more threads do not interfere with each other by accessing the resources at the same time. The diagram below shows four threads trying to access the critical section of a program at the same time.

To make it clearer, suppose two or more threads try to add an object to a list at the same time. Without synchronization, this cannot be relied on to end successfully: one or all of the objects may be dropped, or the state of the list may be corrupted. The role of synchronization here is to ensure that only one thread at a time can access the list.

Issues in thread synchronization

We might encounter issues while implementing concurrent programming or applying synchronizing primitives. In this section, we will discuss two major issues. The issues are −

Race condition

This is one of the major issues in concurrent programming. Concurrent access to shared resources can lead to a race condition. A race condition occurs when two or more threads can access shared data and try to change its value at the same time. Due to this, the values of variables may be unpredictable and vary depending on the timing of context switches between the threads.

Example

Consider this example to understand the concept of race condition −

Step 1 − In this step, we need to import threading module −

import threading

Step 2 − Now, define a global variable, say x, along with its value as 0 −

x = 0

Step 3 − Now, we need to define the increment_global() function, which will increment the global variable x by 1 −

    def increment_global():
        global x
        x += 1

Step 4 − In this step, we will define the taskofThread() function, which will call the increment_global() function for a specified number of times; for our example it is 50000 times −

    def taskofThread():
        for _ in range(50000):
            increment_global()

Step 5 − Now, define the main() function in which threads t1 and t2 are created. Both will be started with the help of the start() function and wait until they finish their jobs with the help of join() function.

    def main():
        global x
        x = 0
        t1 = threading.Thread(target=taskofThread)
        t2 = threading.Thread(target=taskofThread)
        t1.start()
        t2.start()
        t1.join()
        t2.join()

Step 6 − Now, we need to give the range as in for how many iterations we want to call the main() function. Here, we are calling it for 5 times.

    if __name__ == "__main__":
        for i in range(5):
            main()
            print("x = {1} after Iteration {0}".format(i,x))

In the output shown below, we can see the effect of the race condition: the value of x after each iteration is expected to be 100000, but there is a lot of variation in the value. This is due to the concurrent access of threads to the shared global variable x. The exact values differ from run to run and between interpreter versions.

Output

x = 100000 after Iteration 0

x = 54034 after Iteration 1

x = 80230 after Iteration 2

x = 93602 after Iteration 3

x = 93289 after Iteration 4

Dealing with race condition using locks

As we have seen the effect of race condition in the above program, we need a synchronization tool, which can deal with race condition between multiple threads. In Python, the <threading> module provides Lock class to deal with race condition. Further, the Lock class provides different methods with the help of which we can handle race condition between multiple threads. The methods are described below −

acquire() method

This method is used to acquire, i.e., lock, a lock. The call can be blocking or non-blocking depending upon the following True or False value −

With value set to True − If the acquire() method is invoked with True, which is the default argument, then the thread execution is blocked until the lock is unlocked; the method then locks it and returns True.

With value set to False − If the acquire() method is invoked with False, the thread execution is not blocked. The method returns immediately: True if the lock was free and has now been acquired, False if it is already locked.

release() method

This method is used to release a lock. Following are a few important tasks related to this method −

If a lock is locked, then the release() method would unlock it. Its job is to allow exactly one thread to proceed if more than one threads are blocked and waiting for the lock to become unlocked.

It will raise a RuntimeError if the lock is already unlocked.
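The behaviour of acquire() and release() described above can be checked with a minimal, single-threaded sketch. The variable names first, second, third and release_error are hypothetical and used only for illustration.

```python
import threading

lock = threading.Lock()

first = lock.acquire()                  # blocking acquire succeeds; the lock is now held
second = lock.acquire(blocking=False)   # non-blocking: returns False immediately
lock.release()
third = lock.acquire(blocking=False)    # the lock is free again, so this succeeds
lock.release()

try:
    lock.release()                      # releasing a lock that is already unlocked
    release_error = None
except RuntimeError as exc:
    release_error = exc

print(first, second, third, type(release_error).__name__)
```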

Now, we can rewrite the above program with the Lock class and its methods to avoid the race condition. We need to define the taskofThread() function with a lock argument and then use the acquire() and release() methods around each increment so that only one thread at a time updates x.

Example

Following is an example of a Python program that uses locks to deal with the race condition −

    import threading

    x = 0

    def increment_global():
        global x
        x += 1

    def taskofThread(lock):
        for _ in range(50000):
            lock.acquire()
            increment_global()
            lock.release()

    def main():
        global x
        x = 0
        lock = threading.Lock()
        t1 = threading.Thread(target=taskofThread, args=(lock,))
        t2 = threading.Thread(target=taskofThread, args=(lock,))
        t1.start()
        t2.start()
        t1.join()
        t2.join()

    if __name__ == "__main__":
        for i in range(5):
            main()
            print("x = {1} after Iteration {0}".format(i,x))

The following output shows that the effect of the race condition is eliminated: the value of x after every iteration is now 100000, as the program expects.

Output

x = 100000 after Iteration 0

x = 100000 after Iteration 1

x = 100000 after Iteration 2

x = 100000 after Iteration 3

x = 100000 after Iteration 4
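A Lock object can also be used as a context manager, which releases the lock even if the protected code raises an exception. The following is a minimal sketch of the same counter written with the with statement; the names counter, counter_lock and add_many are hypothetical.

```python
import threading

counter = 0
counter_lock = threading.Lock()

def add_many(times):
    global counter
    for _ in range(times):
        with counter_lock:       # acquire() on entry, release() on exit
            counter += 1

workers = [threading.Thread(target=add_many, args=(50000,)) for _ in range(2)]
for w in workers:
    w.start()
for w in workers:
    w.join()

print("counter =", counter)
```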

Deadlocks − The Dining Philosophers problem

Deadlock is a troublesome issue one can face while designing concurrent systems. We can illustrate this issue with the help of the dining philosophers problem as follows −

Edsger Dijkstra originally introduced the dining philosophers problem, one of the famous illustrations of one of the biggest problems of concurrent systems, called deadlock.

In this problem, there are five famous philosophers sitting at a round table eating some food from their bowls. There are five forks that can be used by the five philosophers to eat their food. However, the philosophers decide to use two forks at the same time to eat their food.

Now, there are two main conditions for the philosophers. First, each of the philosophers can be either in eating or in thinking state and second, they must first obtain both the forks, i.e., left and right. The issue arises when each of the five philosophers manages to pick the left fork at the same time. Now they all are waiting for the right fork to be free but they will never relinquish their fork until they have eaten their food and the right fork would never be available. Hence, there would be a deadlock state at the dinner table.

Deadlock in concurrent system

Now if we see, the same issue can arise in our concurrent systems too. The forks in the above example would be the system resources and each philosopher can represent the process, which is competing to get the resources.
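The standoff can be reproduced in a minimal sketch with two threads and two locks standing in for forks. This is an illustration under stated assumptions, not part of the original text: the two Barrier objects force both threads to hold one fork before reaching for the other, and acquire() is called with a timeout so that the script reports the standoff instead of hanging. All names are hypothetical.

```python
import threading

fork_a = threading.Lock()
fork_b = threading.Lock()
both_holding = threading.Barrier(2)   # both threads hold their first fork
both_tried = threading.Barrier(2)     # both threads have tried the second fork
got_second = {}

def philosopher(name, first, second):
    with first:
        both_holding.wait()
        # The other thread holds `second` and will not release it until
        # it has finished its own attempt, so this times out.
        acquired = second.acquire(timeout=0.2)
        got_second[name] = acquired
        both_tried.wait()
        if acquired:
            second.release()

p1 = threading.Thread(target=philosopher, args=("P1", fork_a, fork_b))
p2 = threading.Thread(target=philosopher, args=("P2", fork_b, fork_a))
p1.start()
p2.start()
p1.join()
p2.join()

print(got_second)
```

Neither thread obtains its second fork. With a plain blocking acquire() in place of the timeout, both threads would wait forever, which is the deadlock described above.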

Solution with Python program

The solution of this problem can be found by splitting the philosophers into two types: greedy philosophers and generous philosophers. A greedy philosopher will try to pick up the left fork and wait until it is available. He will then wait for the right fork to be available, pick it up, eat and then put it down. On the other hand, a generous philosopher will try to pick up the left fork and, if it is not available, will wait and try again after some time. If he gets the left fork, he will try to get the right one. If he gets the right fork too, he will eat and release both forks. However, if he does not get the right fork, he will release the left fork.

Example

The following Python program will help us find a solution to the dining philosopher problem −

    import threading
    import random
    import time

    class DiningPhilosopher(threading.Thread):
        running = True

        def __init__(self, xname, Leftfork, Rightfork):
            threading.Thread.__init__(self)
            self.name = xname
            self.Leftfork = Leftfork
            self.Rightfork = Rightfork

        def run(self):
            while(self.running):
                time.sleep(random.uniform(3,13))
                print('%s is hungry.' % self.name)
                self.dine()

        def dine(self):
            fork1, fork2 = self.Leftfork, self.Rightfork
            while self.running:
                fork1.acquire(True)
                locked = fork2.acquire(False)
                if locked: break
                fork1.release()
                print('%s swaps forks' % self.name)
                fork1, fork2 = fork2, fork1
            else:
                return
            self.dining()
            fork2.release()
            fork1.release()

        def dining(self):
            print('%s starts eating '% self.name)
            time.sleep(random.uniform(1,10))
            print('%s finishes eating and now thinking.' % self.name)

    def Dining_Philosophers():
        forks = [threading.Lock() for n in range(5)]
        philosopherNames = ('1st','2nd','3rd','4th', '5th')
        philosophers = [DiningPhilosopher(philosopherNames[i], forks[i%5], forks[(i+1)%5])
                        for i in range(5)]
        random.seed()
        DiningPhilosopher.running = True
        for p in philosophers: p.start()
        time.sleep(30)
        DiningPhilosopher.running = False
        print(" It is finishing.")

    Dining_Philosophers()

The above program uses the concept of greedy and generous philosophers. The program has also used the acquire() and release() methods of the Lock class of the <threading> module. We can see the solution in the following output −

Output

4th is hungry.

4th starts eating

1st is hungry.

1st starts eating

2nd is hungry.

5th is hungry.

3rd is hungry.

1st finishes eating and now thinking.3rd swaps forks

2nd starts eating

4th finishes eating and now thinking.

3rd swaps forks5th starts eating

5th finishes eating and now thinking.

4th is hungry.

4th starts eating

2nd finishes eating and now thinking.

3rd swaps forks

1st is hungry.

1st starts eating

4th finishes eating and now thinking.

3rd starts eating

5th is hungry.

5th swaps forks

1st finishes eating and now thinking.

5th starts eating

2nd is hungry.

2nd swaps forks

4th is hungry.

5th finishes eating and now thinking.

3rd finishes eating and now thinking.

2nd starts eating 4th starts eating

It is finishing.

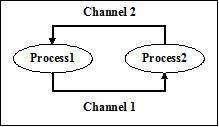

Threads Intercommunication

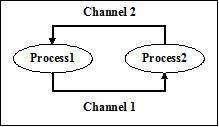

In real life, if a team of people is working on a common task, then there should be communication between them for finishing the task properly. The same analogy is applicable to threads also. In programming, to reduce the idle time of the processor, we create multiple threads and assign different sub-tasks to every thread. Hence, there must be a communication facility, and the threads should interact with each other to finish the job in a synchronized manner.

Consider the following important points related to thread intercommunication −

No performance gain − If we cannot achieve proper communication between threads and processes, then the performance gains from concurrency and parallelism are of no use.

Accomplish task properly − Without proper intercommunication mechanism between threads, the assigned task cannot be completed properly.

More efficient than inter-process communication − Inter-thread communication is more efficient and easy to use than inter-process communication because all threads within a process share same address space and they need not use shared memory.

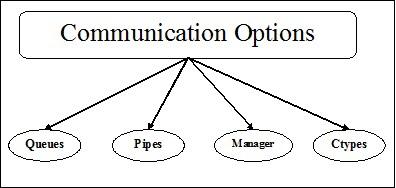

Python data structures for thread-safe communication

Multithreaded code comes up with a problem of passing information from one thread to another thread. The standard communication primitives do not solve this issue. Hence, we need to implement our own composite object in order to share objects between threads to make the communication thread-safe. Following are a few data structures, which provide thread-safe communication after making some changes in them −

Sets

For using set data structure in a thread-safe manner, we need to extend the set class to implement our own locking mechanism.

Example

Here is a Python example of extending the class −

    from threading import Lock

    class extend_class(set):
        def __init__(self, *args, **kwargs):
            self._lock = Lock()
            super(extend_class, self).__init__(*args, **kwargs)

        def add(self, elem):
            self._lock.acquire()
            try:
                super(extend_class, self).add(elem)
            finally:
                self._lock.release()

        def delete(self, elem):
            self._lock.acquire()
            try:
                # The built-in set removes elements with remove().
                super(extend_class, self).remove(elem)
            finally:
                self._lock.release()

In the above example, a class named extend_class has been defined, which inherits from the Python set class. A lock object is created within the constructor of this class. Now, there are two functions - add() and delete(). These functions are defined and are thread-safe. They both rely on the super class functionality with one key exception: each call is made while holding the lock.

Decorator

Another key method for thread-safe communication is the use of decorators.

Example

Consider a Python example that shows how to use decorators −

    from threading import Lock

    def lock_decorator(method):
        def new_deco_method(self, *args, **kwargs):
            # Run the wrapped method while holding the instance's lock.
            with self._lock:
                return method(self, *args, **kwargs)
        return new_deco_method

    class Decorator_class(set):
        def __init__(self, *args, **kwargs):
            self._lock = Lock()
            super(Decorator_class, self).__init__(*args, **kwargs)

        @lock_decorator
        def add(self, elem):
            return super(Decorator_class, self).add(elem)

        @lock_decorator
        def delete(self, elem):
            # The built-in set removes elements with remove().
            return super(Decorator_class, self).remove(elem)

In the above example, a decorator function named lock_decorator has been defined; it wraps a method so that the method body runs while holding self._lock. The class Decorator_class inherits from the Python set class, and a lock object is created within its constructor. Now, there are two functions - add() and delete(). These functions are decorated with lock_decorator and are therefore thread-safe. They both rely on super class functionality with one key exception: each call is made while holding the lock.
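To see a lock-protected set used from several threads, consider the following self-contained sketch. The names synchronized, LockedSet and fill are hypothetical stand-ins chosen for this illustration, and functools.wraps is an optional addition that preserves the wrapped method's name.

```python
import functools
import threading

def synchronized(method):
    """Run the wrapped method while holding the instance's _lock."""
    @functools.wraps(method)
    def wrapper(self, *args, **kwargs):
        with self._lock:
            return method(self, *args, **kwargs)
    return wrapper

class LockedSet(set):
    def __init__(self, *args, **kwargs):
        self._lock = threading.Lock()
        super().__init__(*args, **kwargs)

    @synchronized
    def add(self, elem):
        return super().add(elem)

    @synchronized
    def delete(self, elem):
        return super().remove(elem)

shared = LockedSet()

def fill(start):
    for value in range(start, start + 100):
        shared.add(value)

threads = [threading.Thread(target=fill, args=(i * 100,)) for i in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

shared.delete(0)
print(len(shared))
```

Four threads add 100 distinct values each, and one value is deleted afterwards, so the set ends up with 399 elements.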

Lists

The list data structure is a thread-safe, quick and easy structure for temporary, in-memory storage. In CPython, the GIL protects against concurrent access to it. Lists themselves are thread-safe, but what about the data lying in them? Actually, the list's data is not protected. A single call such as L.append(x) is atomic, but a sequence of operations is not: for example, L[i] = L[i] + 1 or L.append(L[-1]) reads the list and then writes to it, and another thread may modify the list between the two steps. In such cases, we can see the side effects of race conditions in the output.

To resolve this kind of issue and safely modify the data, we must implement a proper locking mechanism, which ensures that multiple threads cannot run into race conditions. To implement a proper locking mechanism, we can extend the class as we did in the previous examples.

Some atomic operations on lists and other built-in types are as follows −

L.append(x)

L1.extend(L2)

x = L[i]

x = L.pop()

L1[i:j] = L2

L.sort()

x = y

x.field = y

D[x] = y

D1.update(D2)

D.keys()

Here −

- L,L1,L2 all are lists

- D,D1,D2 are dicts

- x,y are objects

- i, j are ints
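A compound operation on a list, by contrast, needs an explicit lock. The following minimal sketch protects a read-modify-write on a list element; the names totals, totals_lock and bump are hypothetical.

```python
import threading

totals = [0]
totals_lock = threading.Lock()

def bump(times):
    for _ in range(times):
        with totals_lock:
            # Read-modify-write: two list operations that must not be interleaved.
            totals[0] = totals[0] + 1

threads = [threading.Thread(target=bump, args=(10000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(totals[0])
```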

Queues

If the list's data is not protected, we might have to face the consequences: because of race conditions, we may get or delete the wrong data item. That is why it is recommended to use the queue data structure. A real-world example of a queue is a single-lane one-way road, where the vehicle that enters first exits first. More real-world examples are the queues at ticket windows and bus stops.

Queues are, by default, a thread-safe data structure, and we need not worry about implementing a complex locking mechanism. Python provides the <queue> module to use different types of queues in our application.
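As a minimal sketch of passing data between threads without any explicit lock, one thread can put items on a queue.Queue while another takes them off. The use of None as an end-of-work marker and the names tasks, consumed, producer and consumer are assumptions made for this illustration.

```python
import queue
import threading

tasks = queue.Queue()
consumed = []

def producer():
    for n in range(5):
        tasks.put(n)
    tasks.put(None)           # sentinel (an assumption of this sketch): no more items

def consumer():
    while True:
        item = tasks.get()    # blocks until an item is available
        if item is None:
            break
        consumed.append(item)

threads = [threading.Thread(target=producer), threading.Thread(target=consumer)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(consumed)
```

With a single consumer, the items arrive in the order in which they were put on the queue.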

Types of Queues

In this section, we will learn about the different types of queues. Python provides three options of queues to use from the <queue> module −

- Normal Queues (FIFO, First in First out)

- LIFO, Last in First Out

- Priority

We will learn about the different queues in the subsequent sections.

Normal Queues (FIFO, First in First out)

It is the most commonly used queue implementation offered by Python. In this queuing mechanism, whoever comes first gets the service first. FIFO queues are also called normal queues. FIFO queues can be represented as follows −

Python Implementation of FIFO Queue

In Python, a FIFO queue can be implemented with a single thread as well as with multiple threads.

FIFO queue with single thread

For implementing FIFO queue with single thread, the Queue class will implement a basic first-in, first-out container. Elements will be added to one “end” of the sequence using put(), and removed from the other end using get().

Example

Following is a Python program for implementation of FIFO queue with single thread −

    import queue

    q = queue.Queue()

    for i in range(8):
        q.put("item-" + str(i))

    while not q.empty():
        print(q.get(), end = " ")

Output

item-0 item-1 item-2 item-3 item-4 item-5 item-6 item-7

The output shows that above program uses a single thread to illustrate that the elements are removed from the queue in the same order they are inserted.

FIFO queue with multiple threads

For implementing FIFO with multiple threads, we need to define the myqueue() function, which takes the queue object as its argument and removes items from it. The get() and put() methods work in the same way as discussed above for the FIFO queue with a single thread. Then, to make it multithreaded, we need to declare and instantiate the threads. These threads will consume the queue in FIFO manner.

Example

Following is a Python program for implementation of FIFO queue with multiple threads −

    import threading
    import queue
    import time

    def myqueue(work_queue):
        while True:
            try:
                # get_nowait() raises queue.Empty instead of blocking forever
                # if another thread takes the last item first.
                item = work_queue.get_nowait()
            except queue.Empty:
                break
            if item is None:
                break
            print("{} removed {} from the queue".format(threading.current_thread(), item))
            work_queue.task_done()
            time.sleep(2)

    q = queue.Queue()
    for i in range(5):
        q.put(i)

    threads = []
    for i in range(4):
        thread = threading.Thread(target=myqueue, args=(q,))
        thread.start()
        threads.append(thread)

    for thread in threads:
        thread.join()

Output

<Thread(Thread-3654, started 5044)> removed 0 from the queue

<Thread(Thread-3655, started 3144)> removed 1 from the queue

<Thread(Thread-3656, started 6996)> removed 2 from the queue

<Thread(Thread-3657, started 2672)> removed 3 from the queue

<Thread(Thread-3654, started 5044)> removed 4 from the queue

LIFO, Last in First Out queue

This queue works in the opposite way to FIFO (First in First Out) queues. In this queuing mechanism, the one who comes last gets the service first. This is similar to a stack data structure. LIFO queues prove useful when implementing algorithms such as depth-first search.

Python implementation of LIFO queue

In Python, a LIFO queue can be implemented with a single thread as well as with multiple threads.

LIFO queue with single thread

For implementing a LIFO queue with a single thread, the queue module provides a basic last-in, first-out container in the class queue.LifoQueue. On calling put(), elements are added at the head of the container, and on calling get(), they are removed from the head as well.

Example

Following is a Python program for implementation of the LIFO queue with single thread −

    import queue

    q = queue.LifoQueue()

    for i in range(8):
        q.put("item-" + str(i))

    while not q.empty():
        print(q.get(), end=" ")

Output:

item-7 item-6 item-5 item-4 item-3 item-2 item-1 item-0

The output shows that the above program uses a single thread to illustrate that elements are removed from the queue in the opposite order they are inserted.

LIFO queue with multiple threads

The implementation is similar to the implementation of FIFO queues with multiple threads. The only difference is that we need to use the class queue.LifoQueue, which implements a basic last-in, first-out container.

Example

Following is a Python program for implementation of LIFO queue with multiple threads −

    import threading
    import queue
    import time

    def myqueue(work_queue):
        while True:
            try:
                # get_nowait() raises queue.Empty instead of blocking forever
                # if another thread takes the last item first.
                item = work_queue.get_nowait()
            except queue.Empty:
                break
            if item is None:
                break
            print("{} removed {} from the queue".format(threading.current_thread(), item))
            work_queue.task_done()
            time.sleep(2)

    q = queue.LifoQueue()
    for i in range(5):
        q.put(i)

    threads = []
    for i in range(4):
        thread = threading.Thread(target=myqueue, args=(q,))
        thread.start()
        threads.append(thread)

    for thread in threads:
        thread.join()

Output

<Thread(Thread-3882, started 4928)> removed 4 from the queue

<Thread(Thread-3883, started 4364)> removed 3 from the queue

<Thread(Thread-3884, started 6908)> removed 2 from the queue

<Thread(Thread-3885, started 3584)> removed 1 from the queue

<Thread(Thread-3882, started 4928)> removed 0 from the queue

Priority queue

In FIFO and LIFO queues, the order of items is related to the order of insertion. However, there are many cases in which priority is more important than the order of insertion. Let us consider a real-world example. Suppose the security staff at an airport is checking people of different categories. People in categories such as VVIPs, airline staff and customs officers may be checked on priority, instead of being checked in order of arrival like other passengers.

Another important application of the priority queue is the development of a task scheduler. One common design is to serve the most urgent task in the queue first. This data structure can be used to pick items from the queue based on their priority value.
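The scheduler design described above can be sketched as follows, using queue.PriorityQueue from the standard library, which the next section introduces. The task names, the priority numbers and the helper schedule() are hypothetical. The counter is an added assumption that keeps tasks with equal priority in insertion order.

```python
import itertools
import queue

# Hypothetical task names and priorities, chosen only for illustration.
scheduler = queue.PriorityQueue()
counter = itertools.count()  # tie-breaker that preserves insertion order

def schedule(priority, name):
    # Lower numbers are served first; the counter orders equal priorities.
    scheduler.put((priority, next(counter), name))

schedule(3, "send newsletter")
schedule(1, "restart failed service")
schedule(2, "rotate logs")
schedule(1, "page on-call engineer")

served = []
while not scheduler.empty():
    priority, _, name = scheduler.get()
    served.append(name)
    print(priority, name)
```

Both tasks with priority 1 are served before the others, and among them the one scheduled first comes out first.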

Python Implementation of Priority Queue

In Python, a priority queue can be implemented with a single thread as well as with multiple threads.

Priority queue with single thread

For implementing a priority queue with a single thread, the queue module provides a priority-ordered container through the class queue.PriorityQueue. On calling put(), elements are added together with a value; the lowest value has the highest priority and is therefore retrieved first by get().

Example

Consider the following Python program for implementation of Priority queue with single thread −

import queue as Q

p_queue = Q.PriorityQueue()

# Each entry is a (priority, description) tuple; lower numbers come out first.
p_queue.put((2, 'Urgent'))
p_queue.put((1, 'Most Urgent'))
p_queue.put((10, 'Nothing important'))
p_queue.put((5, 'Important'))

while not p_queue.empty():
    item = p_queue.get()
    print('%s - %s' % item)

Output

1 - Most Urgent

2 - Urgent

5 - Important

10 - Nothing important

In the above output, we can see that the queue has returned the items in order of priority: a lower value means a higher priority.

Priority queue with multi threads

The implementation is similar to the implementation of FIFO and LIFO queues with multiple threads. The only difference is that we need to use the class queue.PriorityQueue, which orders the items by priority. Another difference is in the way the queue is filled. In the example given below, it is filled with two identical sets of values, so each value appears twice.

Example

Following is a Python program for implementation of priority queue with multiple threads −

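import threading
import queue
import time

def myqueue(q):
    # Each worker keeps taking items until the queue is drained.
    while True:
        try:
            # get_nowait() never blocks, so a worker cannot hang if another
            # thread takes the last item first.
            item = q.get_nowait()
        except queue.Empty:
            break
        print("{} removed {} from the queue".format(threading.current_thread(), item))
        q.task_done()
        time.sleep(1)

q = queue.PriorityQueue()

# The integer itself acts as the priority. (A second positional argument
# to put() would be the block flag, not a priority.)
for i in range(5):
    q.put(i)

# Second, identical data set.
for i in range(5):
    q.put(i)

threads = []
for i in range(2):
    thread = threading.Thread(target=myqueue, args=(q,))
    thread.start()
    threads.append(thread)

# Wait for all workers to finish.
for thread in threads:
    thread.join()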

Output

<Thread(Thread-4939, started 2420)> removed 0 from the queue

<Thread(Thread-4940, started 3284)> removed 0 from the queue

<Thread(Thread-4939, started 2420)> removed 1 from the queue

<Thread(Thread-4940, started 3284)> removed 1 from the queue

<Thread(Thread-4939, started 2420)> removed 2 from the queue

<Thread(Thread-4940, started 3284)> removed 2 from the queue

<Thread(Thread-4939, started 2420)> removed 3 from the queue

<Thread(Thread-4940, started 3284)> removed 3 from the queue

<Thread(Thread-4939, started 2420)> removed 4 from the queue

<Thread(Thread-4940, started 3284)> removed 4 from the queue

Testing Thread Applications

In this chapter, we will learn about testing of thread applications. We will also learn the importance of testing.

Why to Test?

Before we dive into the discussion about the importance of testing, we need to know what testing is. In general terms, testing is a technique of finding out how well something is working. For computer programs or software specifically, testing is the technique of assessing the functionality of a software program.

In this section, we will discuss the importance of software testing. In software development, software must be double-checked before it is released to the client. That is why it is very important that the software is tested by an experienced testing team. Consider the following points to understand the importance of software testing −

Improvement of software quality

Certainly, no company wants to deliver low quality software and no client wants to buy low quality software. Testing improves the quality of software by finding the bugs in it so that they can be fixed.

Satisfaction of customers

The most important part of any business is the satisfaction of its customers. By providing good quality software with as few bugs as possible, companies can achieve customer satisfaction.

Lessen the impact of new features

Suppose we have built a software system of 10000 lines and need to add a new feature. The development team would be concerned about the impact of this new feature on the whole software. Here, too, testing plays a vital role, because a good suite of tests can save us from potentially catastrophic breaks.

User experience

Another important part of any business is the experience of the users of its product. Testing helps assure that the end user finds the product simple and easy to use.

Cutting down the expenses

Testing can cut down the total cost of software by finding and fixing bugs in the testing phase of development rather than after delivery. A major bug found after delivery increases both tangible costs, such as expenses, and intangible costs, such as customer dissatisfaction and a negative reputation for the company.

What to Test?

It is always recommended to know clearly what is to be tested. In this section, we will first understand what the prime motive of a tester should be while testing any software. Code coverage, i.e., how many lines of code our test suite hits, should not be the main goal. Focusing only on the number of lines exercised adds little real value to our system, and bugs may remain that surface at a later stage, even after deployment.

Consider the following important points related to what to test −

We need to focus on testing the functionality of the code rather than the code coverage.

We need to test the most important parts of the code first and then move towards the less important parts of the code. It will definitely save time.

The tester must have a multitude of different tests that can push the software to its limits.

Approaches for testing concurrent software programs

Because they can utilize the true capability of multi-core architectures, concurrent software systems are replacing sequential systems. Concurrent programs are now used in everything from mobile phones to washing machines and from cars to airplanes. We need to be more careful when testing concurrent software, because adding multiple threads to a single-threaded application that already has a bug can leave us with multiple bugs.

Testing techniques for concurrent software programs focus largely on selecting interleavings that expose potentially harmful patterns such as race conditions, deadlocks and atomicity violations. Following are two approaches for testing concurrent software programs −

Systematic exploration

This approach aims to explore the space of interleavings as broadly as possible. Some techniques explore it by brute force, while others use partial order reduction or heuristics to reduce the number of interleavings that have to be examined.
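A brute-force exploration can be sketched on a toy model. The simulation below is an assumption made for illustration, not a real testing tool: two simulated threads each increment a shared counter in two steps (read, then write), and every schedule that keeps each thread's own steps in order is executed and checked.

```python
from itertools import combinations

# Each simulated thread performs two steps on a shared counter:
# "read" copies the counter into a local value, "write" stores local + 1.
STEPS_PER_THREAD = ["read", "write"]

def all_interleavings(n_a, n_b):
    """Yield every schedule of thread A's n_a steps and thread B's n_b steps
    that keeps each thread's own steps in program order."""
    total = n_a + n_b
    for a_slots in combinations(range(total), n_a):
        yield ["A" if i in a_slots else "B" for i in range(total)]

def run(schedule):
    """Simulate one schedule and return the final counter value."""
    counter = 0
    local = {"A": 0, "B": 0}
    next_step = {"A": 0, "B": 0}
    for thread in schedule:
        step = STEPS_PER_THREAD[next_step[thread]]
        if step == "read":
            local[thread] = counter
        else:
            counter = local[thread] + 1
        next_step[thread] += 1
    return counter

schedules = list(all_interleavings(2, 2))
# Two increments should give 2; anything else means an update was lost.
faulty = [s for s in schedules if run(s) != 2]
print(len(schedules), "schedules explored,", len(faulty), "lose an update")
```

Only the two schedules in which one thread finishes before the other starts give the correct result. The number of schedules grows very quickly with the number of steps, which is why reduction techniques and heuristics are used in practice.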

Property-driven

Property-driven approaches rely on the observation that concurrency faults are more likely to occur under interleavings that expose specific properties, such as a suspicious memory access pattern. Different property-driven approaches target different faults, such as race conditions, deadlocks and atomicity violations, and each depends on its own specific properties.
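As one hedged illustration of a property-driven check, the sketch below looks for a single suspicious property: two threads acquiring the same pair of locks in opposite orders, which can lead to a deadlock. The traces, lock names and function names are hypothetical, and the sketch assumes that each trace lists locks that are acquired while the earlier ones are still held.

```python
# Hypothetical recorded lock-acquisition traces, one list per thread.
# Assumption: every lock in a trace is acquired while the earlier ones are held.
traces = {
    "worker-1": ["lock_a", "lock_b"],
    "worker-2": ["lock_b", "lock_a"],
}

def ordered_pairs(trace):
    """Return every (earlier, later) pair of locks in acquisition order."""
    return {(trace[i], trace[j])
            for i in range(len(trace))
            for j in range(i + 1, len(trace))}

def lock_order_inversions(traces):
    """Return the lock pairs that different threads acquire in opposite orders."""
    first_seen_by = {}  # (earlier, later) -> thread that first used this order
    inversions = set()
    for thread, trace in traces.items():
        for earlier, later in ordered_pairs(trace):
            other = first_seen_by.get((later, earlier))
            if other is not None and other != thread:
                inversions.add(frozenset((earlier, later)))
            first_seen_by.setdefault((earlier, later), thread)
    return inversions

inversions = lock_order_inversions(traces)
print(inversions)
```

The check reports the pair lock_a and lock_b even if the recorded run did not actually deadlock, which is the point of targeting a property rather than waiting for the fault itself to appear.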

Testing Strategies

Test Strategy is also known as test approach. The strategy defines how testing would be carried out. Test approach has two techniques −

Proactive

An approach in which the test design process is initiated as early as possible in order to find and fix the defects before the build is created.

Reactive

An approach in which the testing does not start until the completion of the development process.

Before applying any test strategy or approach to a Python program, we must have a basic idea of the kinds of errors a software program may have. The errors are as follows −

Syntactical errors

During program development, there can be many small errors. These errors are mostly due to typing mistakes, for example a missing colon or a misspelled keyword. Such errors are due to mistakes in the program syntax and not in its logic. Hence, these errors are called syntactical errors.

Semantic errors

Semantic errors are also called logical errors. If there is a logical or semantic error in a software program, the statement will compile and run without complaint, but it will not give the desired output because the logic is not correct.
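The difference between the two kinds of errors can be seen in a small sketch. The function add() and the source string are hypothetical examples; compile() is used here only so that the syntactical error can be shown without stopping the script.

```python
# A syntactical error: the colon after the function header is missing.
# compile() lets us trigger the SyntaxError without crashing this script.
broken_source = "def add(a, b)\n    return a + b\n"
try:
    compile(broken_source, "<example>", "exec")
    syntax_ok = True
except SyntaxError:
    syntax_ok = False

# A semantic (logical) error: this runs without complaint but is wrong,
# because it subtracts instead of adding.
def add(a, b):
    return a - b

print(syntax_ok)   # False
print(add(2, 3))   # -1, although 5 was intended
```

Python reports the first error by itself, but only a test that compares the result of add(2, 3) with the expected value 5 would reveal the second one.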

Unit Testing

This is one of the most used strategies for testing Python programs. This strategy is used for testing units or components of the code. By units or components, we mean classes or functions of the code. Unit testing simplifies the testing of large programming systems by testing "small" units. With the help of the above concept, unit testing may be defined as a method where individual units of source code are tested to determine if they return the desired output.

In our subsequent sections, we will learn about the different Python modules for unit testing.

unittest module

The very first module for unit testing is the unittest module. It is inspired by JUnit and is included in the Python standard library. It supports test automation, sharing of setup and shutdown code for tests, aggregation of tests into collections, and independence of the tests from the reporting framework.

Following are a few important concepts supported by the unittest module −

Test fixture

It is the preparation needed to run one or more tests, together with the associated cleanup. The fixture is set up before the test starts and torn down after the test finishes. It may involve creating temporary databases, directories, etc. needed by the test.

Test case

The test case checks whether the required response is obtained from a specific set of inputs. The unittest module includes a base class named TestCase which can be used to create new test cases. It includes two methods by default −

setUp() − a hook method for setting up the test fixture before exercising it. This is called before calling the implemented test methods.

tearDown() − a hook method for deconstructing the test fixture after testing it. This is called after each implemented test method has run.

Test suite

It is a collection of test suites, test cases or both.

Test runner

It controls the running of the test cases or suites and provides the outcome to the user. It may use a GUI or a simple text interface for providing the outcome.

Example