DIP - Quick Guide

Digital Image Processing Introduction

Introduction

Signal processing is a discipline in electrical engineering and in mathematics that deals with analysis and processing of analog and digital signals , and deals with storing , filtering , and other operations on signals. These signals include transmission signals , sound or voice signals , image signals , and other signals e.t.c.

Out of all these signals , the field that deals with the type of signals for which the input is an image and the output is also an image is done in image processing. As it name suggests, it deals with the processing on images.

It can be further divided into analog image processing and digital image processing.

Analog image processing

Analog image processing is done on analog signals. It includes processing on two dimensional analog signals. In this type of processing, the images are manipulated by electrical means by varying the electrical signal. The common example include is the television image.

Digital image processing has dominated over analog image processing with the passage of time due its wider range of applications.

Digital image processing

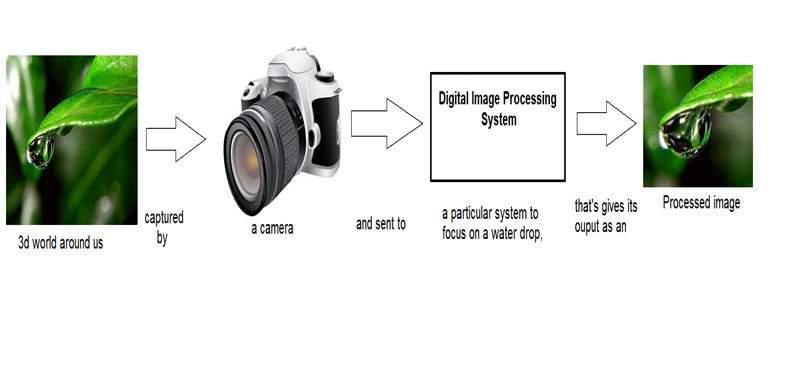

The digital image processing deals with developing a digital system that performs operations on an digital image.

What is an Image

An image is nothing more than a two dimensional signal. It is defined by the mathematical function f(x,y) where x and y are the two co-ordinates horizontally and vertically.

The value of f(x,y) at any point is gives the pixel value at that point of an image.

The above figure is an example of digital image that you are now viewing on your computer screen. But actually , this image is nothing but a two dimensional array of numbers ranging between 0 and 255.

| 128 | 30 | 123 |

| 232 | 123 | 321 |

| 123 | 77 | 89 |

| 80 | 255 | 255 |

Each number represents the value of the function f(x,y) at any point. In this case the value 128 , 230 ,123 each represents an individual pixel value. The dimensions of the picture is actually the dimensions of this two dimensional array.

Relationship between a digital image and a signal

If the image is a two dimensional array then what does it have to do with a signal?

In order to understand that , We need to first understand what is a signal?

Signal

In physical world, any quantity measurable through time over space or any higher dimension can be taken as a signal. A signal is a mathematical function, and it conveys some information.

A signal can be one dimensional or two dimensional or higher dimensional signal.

One dimensional signal is a signal that is measured over time. The common example is a voice signal.

The two dimensional signals are those that are measured over some other physical quantities. The example of two dimensional signal is a digital image.

We will look in more detail in the next tutorial of how a one dimensional or two dimensional signals and higher signals are formed and interpreted.

Relationship

Since anything that conveys information or broadcast a message in physical world between two observers is a signal. That includes speech or (human voice) or an image as a signal. Since when we speak , our voice is converted to a sound wave/signal and transformed with respect to the time to person we are speaking to.

Not only this , but the way a digital camera works, as while acquiring an image from a digital camera involves transfer of a signal from one part of the system to the other.

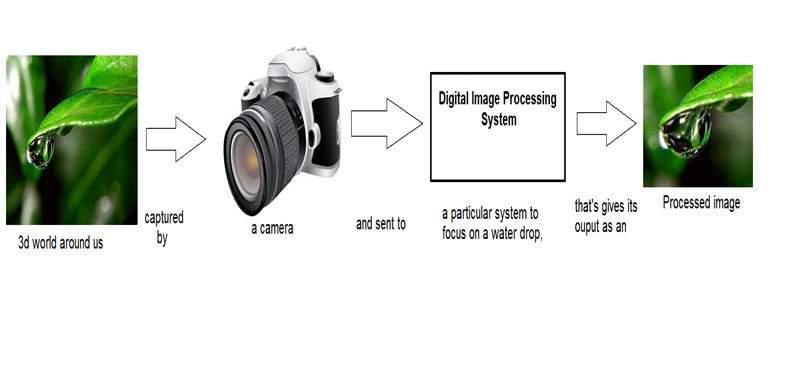

How a digital image is formed

Since capturing an image from a camera is a physical process. The sunlight is used as a source of energy. A sensor array is used for the acquisition of the image. So when the sunlight falls upon the object, then the amount of light reflected by that object is sensed by the sensors, and a continuous voltage signal is generated by the amount of sensed data. In order to create a digital image , we need to convert this data into a digital form. This involves sampling and quantization. (They are discussed later on). The result of sampling and quantization results in an two dimensional array or matrix of numbers which are nothing but a digital image.

Overlapping fields

Machine/Computer vision

Machine vision or computer vision deals with developing a system in which the input is an image and the output is some information. For example: Developing a system that scans human face and opens any kind of lock. This system would look something like this.

Computer graphics

Computer graphics deals with the formation of images from object models, rather then the image is captured by some device. For example: Object rendering. Generating an image from an object model. Such a system would look something like this.

Artificial intelligence

Artificial intelligence is more or less the study of putting human intelligence into machines. Artificial intelligence has many applications in image processing. For example: developing computer aided diagnosis systems that help doctors in interpreting images of X-ray , MRI e.t.c and then highlighting conspicuous section to be examined by the doctor.

Signal processing

Signal processing is an umbrella and image processing lies under it. The amount of light reflected by an object in the physical world (3d world) is pass through the lens of the camera and it becomes a 2d signal and hence result in image formation. This image is then digitized using methods of signal processing and then this digital image is manipulated in digital image processing.

Signals and Systems Introduction

This tutorial covers the basics of signals and system necessary for understanding the concepts of digital image processing. Before going into the detail concepts , lets first define the simple terms.

Signals

In electrical engineering, the fundamental quantity of representing some information is called a signal. It doesnot matter what the information is i-e: Analog or digital information. In mathematics, a signal is a function that conveys some information. In fact any quantity measurable through time over space or any higher dimension can be taken as a signal. A signal could be of any dimension and could be of any form.

Analog signals

A signal could be an analog quantity that means it is defined with respect to the time. It is a continuous signal. These signals are defined over continuous independent variables. They are difficult to analyze, as they carry a huge number of values. They are very much accurate due to a large sample of values. In order to store these signals , you require an infinite memory because it can achieve infinite values on a real line. Analog signals are denoted by sin waves.

For example:

Human voice

Human voice is an example of analog signals. When you speak , the voice that is produced travel through air in the form of pressure waves and thus belongs to a mathematical function, having independent variables of space and time and a value corresponding to air pressure.

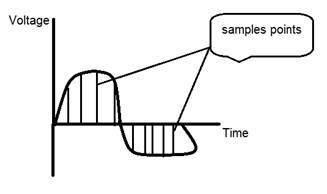

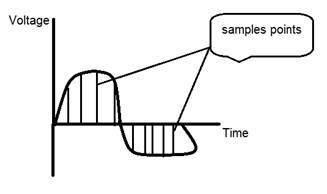

Another example is of sin wave which is shown in the figure below.

Y = sin(x) where x is indepedent

Digital signals

As compared to analog signals, digital signals are very easy to analyze. They are discontinuous signals. They are the appropriation of analog signals.

The word digital stands for discrete values and hence it means that they use specific values to represent any information. In digital signal , only two values are used to represent something i-e: 1 and 0 (binary values). Digital signals are less accurate then analog signals because they are the discrete samples of an analog signal taken over some period of time. However digital signals are not subject to noise. So they last long and are easy to interpret. Digital signals are denoted by square waves.

For example:

Computer keyboard

Whenever a key is pressed from the keyboard , the appropriate electrical signal is sent to keyboard controller containing the ASCII value that particular key. For example the electrical signal that is generated when keyboard key a is pressed, carry information of digit 97 in the form of 0 and 1, which is the ASCII value of character a.

Difference between analog and digital signals

| Comparison element | Analog signal | Digital signal |

|---|

| Analysis | Difficult | Possible to analyze |

| Representation | Continuous | Discontinuous |

| Accuracy | More accurate | Less accurate |

| Storage | Infinite memory | Easily stored |

| Subject to Noise | Yes | No |

| Recording Technique | Original signal is preserved | Samples of the signal are taken and preserved |

| Examples | Human voice , Thermometer , Analog phones e.t.c | Computers , Digital Phones , Digital pens , e.t.c |

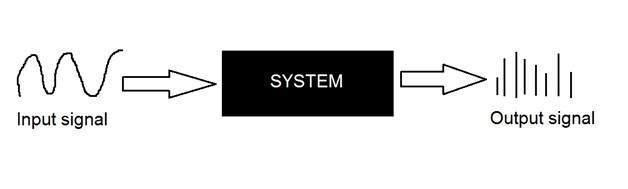

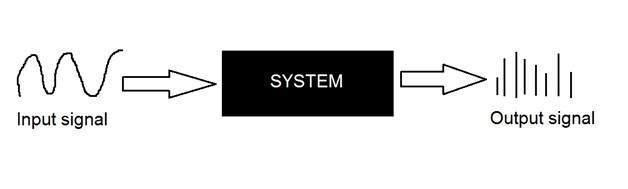

Systems

A system is a defined by the type of input and output it deals with. Since we are dealing with signals , so in our case , our system would be a mathematical model , a piece of code/software , or a physical device , or a black box whose input is a signal and it performs some processing on that signal , and the output is a signal. The input is known as excitation and the output is known as response.

In the above figure a system has been shown whose input and output both are signals but the input is an analog signal. And the output is an digital signal. It means our system is actually a conversion system that converts analog signals to digital signals.

Lets have a look at the inside of this black box system

Conversion of analog to digital signals

Since there are lot of concepts related to this analog to digital conversion and vice-versa. We will only discuss those which are related to digital image processing.

There are two main concepts that are involved in the coversion.

Sampling

Sampling as its name suggests can be defined as take samples. Take samples of a digital signal over x axis. Sampling is done on an independent variable. In case of this mathematical equation:

Sampling is done on the x variable. We can also say that the conversion of x axis (infinite values) to digital is done under sampling.

Sampling is further divide into up sampling and down sampling. If the range of values on x-axis are less then we will increase the sample of values. This is known as up sampling and its vice versa is known as down sampling

Quantization

Quantization as its name suggest can be defined as dividing into quanta (partitions). Quantization is done on dependent variable. It is opposite to sampling.

In case of this mathematical equation y = sin(x)

Quantization is done on the Y variable. It is done on the y axis. The conversion of y axis infinite values to 1 , 0 , -1 (or any other level) is known as Quantization.

These are the two basics steps that are involved while converting an analog signal to a digital signal.

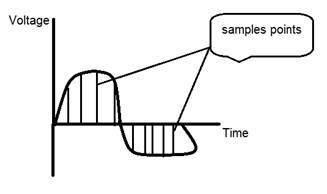

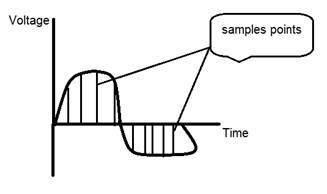

The quantization of a signal has been shown in the figure below.

Why do we need to convert an analog signal to digital signal.

The first and obvious reason is that digital image processing deals with digital images , that are digital signals. So when ever the image is captured , it is converted into digital format and then it is processed.

The second and important reason is , that in order to perform operations on an analog signal with a digital computer , you have to store that analog signal in the computer. And in order to store an analog signal , infinite memory is required to store it. And since thats not possible , so thats why we convert that signal into digital format and then store it in digital computer and then performs operations on it.

Continuous systems vs discrete systems

Continuous systems

The type of systems whose input and output both are continuous signals or analog signals are called continuous systems.

Discrete systems

The type of systems whose input and output both are discrete signals or digital signals are called digital systems

History of Photography

Origin of camera

The history of camera and photography is not exactly the same. The concepts of camera were introduced a lot before the concept of photography

Camera Obscura

The history of the camera lies in ASIA. The principles of the camera were first introduced by a Chinese philosopher MOZI. It is known as camera obscura. The cameras evolved from this principle.

The word camera obscura is evolved from two different words. Camera and Obscura. The meaning of the word camera is a room or some kind of vault and Obscura stands for dark.

The concept which was introduced by the Chinese philosopher consist of a device, that project an image of its surrounding on the wall. However it was not built by the Chinese.

The creation of camera obscura

The concept of Chinese was bring in reality by a Muslim scientist Abu Ali Al-Hassan Ibn al-Haitham commonly known as Ibn al-Haitham. He built the first camera obscura. His camera follows the principles of pinhole camera. He build this device in somewhere around 1000.

Portable camera

In 1685, a first portable camera was built by Johann Zahn. Before the advent of this device , the camera consist of a size of room and were not portable. Although a device was made by an Irish scientist Robert Boyle and Robert Hooke that was a transportable camera, but still that device was very huge to carry it from one place to the other.

Origin of photography

Although the camera obscura was built in 1000 by a Muslim scientist. But its first actual use was described in the 13th century by an English philosopher Roger Bacon. Roger suggested the use of camera for the observation of solar eclipses.

Da Vinci

Although much improvement has been made before the 15th century , but the improvements and the findings done by Leonardo di ser Piero da Vinci was remarkable. Da Vinci was a great artist , musician , anatomist , and a war enginner. He is credited for many inventions. His one of the most famous painting includes, the painting of Mona Lisa.

Da vinci not only built a camera obscura following the principle of a pin hole camera but also uses it as drawing aid for his art work. In his work , which was described in Codex Atlanticus , many principles of camera obscura has been defined.

His camera follows the principle of a pin hole camera which can be described as

When images of illuminated objects penetrate through a small hole into a very dark room you will see [on the opposite wall] these objects in their proper form and color, reduced in size in a reversed position, owing to the intersection of rays.

First photograph

The first photograph was taken in 1814 by a French inventor Joseph Nicephore Niepce. He captures the first photograph of a view from the window at Le Gras, by coating the pewter plate with bitumen and after that exposing that plate to light.

First underwater photograph

The first underwater photograph was taken by an English mathematician William Thomson using a water tight box. This was done in 1856.

The origin of film

The origin of film was introduced by an American inventor and a philanthropist known as George Eastman who is considered as the pioneer of photography.

He founded the company called as Eastman Kodak , which is famous for developing films. The company starts manufacturing paper film in 1885. He first created the camera Kodak and then later Brownie. Brownie was a box camera and gain its popularity due to its feature of Snapshot.

After the advent of the film , the camera industry once again got a boom and one invention lead to another.

Leica and Argus

Leica and argus are the two analog cameras developed in 1925 and in 1939 respectively. The camera Leica was built using a 35mm cine film.

Argus was another camera analog camera that uses the 35mm format and was rather inexpensive as compared by Leica and became very popular.

Analog CCTV cameras

In 1942 a German engineer Walter Bruch developed and installed the very first system of the analog CCTV cameras. He is also credited for the invention of color television in the 1960.

Photo Pac

The first disposable camera was introduced in 1949 by Photo Pac. The camera was only a one time use camera with a roll of film already included in it. The later versions of Photo pac were water proof and even have the flash.

Digital Cameras

Mavica by Sony

Mavica (the magnetic video camera) was launched by Sony in 1981 was the first game changer in digital camera world. The images were recorded on floppy disks and images can be viewed later on any monitor screen.

It was not a pure digital camera , but an analog camera. But got its popularity due to its storing capacity of images on a floppy disks. It means that you can now store images for a long lasting period , and you can save a huge number of pictures on the floppy which are replaced by the new blank disc , when they got full. Mavica has the capacity of storing 25 images on a disk.

One more important thing that mavica introduced was its 0.3 mega pixel capacity of capturing photos.

Digital Cameras

Fuji DS-1P camera by Fuji films 1988 was the first true digital camera

Nikon D1 was a 2.74 mega pixel camera and the first commercial digital SLR camera developed by Nikon , and was very much affordable by the professionals.

Today digital cameras are included in the mobile phones with very high resolution and quality.

Applications and Usage

Since digital image processing has very wide applications and almost all of the technical fields are impacted by DIP, we will just discuss some of the major applications of DIP.

Digital Image processing is not just limited to adjust the spatial resolution of the everyday images captured by the camera. It is not just limited to increase the brightness of the photo, e.t.c. Rather it is far more than that.

Electromagnetic waves can be thought of as stream of particles, where each particle is moving with the speed of light. Each particle contains a bundle of energy. This bundle of energy is called a photon.

The electromagnetic spectrum according to the energy of photon is shown below.

In this electromagnetic spectrum, we are only able to see the visible spectrum.

Visible spectrum mainly includes seven different colors that are commonly term as (VIBGOYR). VIBGOYR stands for violet , indigo , blue , green , orange , yellow and Red.

But that doesnot nullify the existence of other stuff in the spectrum. Our human eye can only see the visible portion, in which we saw all the objects. But a camera can see the other things that a naked eye is unable to see. For example: x rays , gamma rays , e.t.c. Hence the analysis of all that stuff too is done in digital image processing.

This discussion leads to another question which is

why do we need to analyze all that other stuff in EM spectrum too?

The answer to this question lies in the fact, because that other stuff such as XRay has been widely used in the field of medical. The analysis of Gamma ray is necessary because it is used widely in nuclear medicine and astronomical observation. Same goes with the rest of the things in EM spectrum.

Applications of Digital Image Processing

Some of the major fields in which digital image processing is widely used are mentioned below

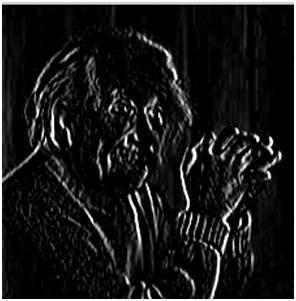

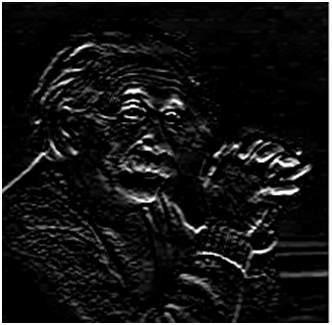

Image sharpening and restoration

Image sharpening and restoration refers here to process images that have been captured from the modern camera to make them a better image or to manipulate those images in way to achieve desired result. It refers to do what Photoshop usually does.

This includes Zooming, blurring , sharpening , gray scale to color conversion, detecting edges and vice versa , Image retrieval and Image recognition.

The common examples are:

The original image

The zoomed image

Blurr image

Sharp image

Edges

Medical field

The common applications of DIP in the field of medical is

Gamma ray imaging

PET scan

X Ray Imaging

Medical CT

UV imaging

UV imaging

In the field of remote sensing , the area of the earth is scanned by a satellite or from a very high ground and then it is analyzed to obtain information about it.

One particular application of digital image processing in the field of remote sensing is to detect infrastructure damages caused by an earthquake.

As it takes longer time to grasp damage, even if serious damages are focused on. Since the area effected by the earthquake is sometimes so wide , that it not possible to examine it with human eye in order to estimate damages. Even if it is , then it is very hectic and time consuming procedure. So a solution to this is found in digital image processing. An image of the effected area is captured from the above ground and then it is analyzed to detect the various types of damage done by the earthquake.

The key steps include in the analysis are

The extraction of edges

Analysis and enhancement of various types of edges

Transmission and encoding

The very first image that has been transmitted over the wire was from London to New York via a submarine cable. The picture that was sent is shown below.

The picture that was sent took three hours to reach from one place to another.

Now just imagine , that today we are able to see live video feed , or live cctv footage from one continent to another with just a delay of seconds. It means that a lot of work has been done in this field too.

This field doesnot only focus on transmission , but also on encoding. Many different formats have been developed for high or low bandwith to encode photos and then stream it over the internet or e.t.c.

Machine/Robot vision

Apart form the many challenges that a robot face today , one of the biggest challenge still is to increase the vision of the robot. Make robot able to see things , identify them , identify the hurdles e.t.c. Much work has been contributed by this field and a complete other field of computer vision has been introduced to work on it.

Hurdle detection

Hurdle detection is one of the common task that has been done through image processing, by identifying different type of objects in the image and then calculating the distance between robot and hurdles.

Line follower robot

Most of the robots today work by following the line and thus are called line follower robots. This help a robot to move on its path and perform some tasks. This has also been achieved through image processing.

Color processing

Color processing includes processing of colored images and different color spaces that are used. For example RGB color model , YCbCr, HSV. It also involves studying transmission , storage , and encoding of these color images.

Pattern recognition

Pattern recognition involves study from image processing and from various other fields that includes machine learning ( a branch of artificial intelligence). In pattern recognition , image processing is used for identifying the objects in an images and then machine learning is used to train the system for the change in pattern. Pattern recognition is used in computer aided diagnosis , recognition of handwriting , recognition of images e.t.c

Video processing

A video is nothing but just the very fast movement of pictures. The quality of the video depends on the number of frames/pictures per minute and the quality of each frame being used. Video processing involves noise reduction , detail enhancement , motion detection , frame rate conversion , aspect ratio conversion , color space conversion e.t.c.

Concept of Dimensions

We will look at this example in order to understand the concept of dimension.

Consider you have a friend who lives on moon, and he wants to send you a gift on your birthday present. He ask you about your residence on earth. The only problem is that the courier service on moon doesnot understand the alphabetical address, rather it only understand the numerical co-ordinates. So how do you send him your position on earth?

Thats where comes the concept of dimensions. Dimensions define the minimum number of points required to point a position of any particular object within a space.

So lets go back to our example again in which you have to send your position on earth to your friend on moon. You send him three pair of co-ordinates. The first one is called longitude , the second one is called latitude, and the third one is called altitude.

These three co-ordinates define your position on the earth. The first two defines your location , and the third one defines your height above the sea level.

So that means that only three co-ordinates are required to define your position on earth. That means you live in world which is 3 dimensional. And thus this not only answers the question about dimension , but also answers the reason , that why we live in a 3d world.

Since we are studying this concept in reference to the digital image processing, so we are now going to relate this concept of dimension with an image.

Dimensions of image

So if we live in the 3d world , means a 3 dimensional world, then what are the dimensions of an image that we capture.

An image is a two dimensional, thats why we also define an image as a 2 dimensional signal. An image has only height and width. An image doesnot have depth. Just have a look at this image below.

If you would look at the above figure , it shows that it has only two axis which are the height and width axis. You cannot perceive depth from this image. Thats why we say that an image is two dimensional signal. But our eye is able to perceive three dimensional objects , but this would be more explained in the next tutorial of how the camera works , and image is perceived.

This discussion leads to some other questions that how 3 dimension systems is formed from 2 dimension.

How does television works?

If we look the image above , we will see that it is a two dimensional image. In order to convert it into three dimension , we need one other dimension.

Lets take time as the third dimension , in that case we will move this two dimensional image over the third dimension time. The same concept that happens in television, that helps us perceive the depth of different objects on a screen. Does that mean that what comes on the T.V or what we see in the television screen is 3d. Well we can yes.

The reason is that, in case of T.V we if we are playing a video. Then a video is nothing else but two dimensional pictures move over time dimension. As two dimensional objects are moving over the third dimension which is a time so we can say it is 3 dimensional.

Different dimensions of signals

1 dimension signal

The common example of a 1 dimension signal is a waveform. It can be mathematically represented as

F(x) = waveform

Where x is an independent variable. Since it is a one dimension signal , so thats why there is only one variable x is used.

Pictorial representation of a one dimensional signal is given below:

The above figure shows a one dimensional signal.

Now this lead to another question, which is, even though it is a one dimensional signal ,then why does it have two axis?. The answer to this question is that even though it is a one dimensional signal , but we are drawing it in a two dimensional space. Or we can say that the space in which we are representing this signal is two dimensional. Thats why it looks like a two dimensional signal.

Perhaps you can understand the concept of one dimension more better by looking at the figure below.

Now refer back to our initial discussion on dimension, Consider the above figure a real line with positive numbers from one point to the other. Now if we have to explain the location of any point on this line, we just need only one number, which means only one dimension.

2 dimensions signal

The common example of a two dimensional signal is an image , which has already been discussed above.

As we have already seen that an image is two dimensional signal, i-e: it has two dimensions. It can be mathematically represented as:

F (x , y) = Image

Where x and y are two variables. The concept of two dimension can also be explained in terms of mathematics as:

Now in the above figure, label the four corners of the square as A,B,C and D respectively. If we call , one line segment in the figure AB and the other CD , then we can see that these two parallel segments join up and make a square. Each line segment corresponds to one dimension , so these two line segments correspond to 2 dimensions.

3 dimension signal

Three dimensional signal as it names refers to those signals which has three dimensions. The most common example has been discussed in the beginning which is of our world. We live in a three dimensional world. This example has been discussed very elaborately.

Another example of a three dimensional signal is a cube or a volumetric data or the most common example would be animated or 3d cartoon character.

The mathematical representation of three dimensional signal is:

F(x,y,z) = animated character.

Another axis or dimension Z is involved in a three dimension, that gives the illusion of depth. In a Cartesian co-ordinate system it can be viewed as:

4 dimension signal

In a four dimensional signal , four dimensions are involved. The first three are the same as of three dimensional signal which are: (X, Y, Z), and the fourth one which is added to them is T(time). Time is often referred to as temporal dimension which is a way to measure change.

Mathematically a four d signal can be stated as:

F(x,y,z,t) = animated movie.

The common example of a 4 dimensional signal can be an animated 3d movie. As each character is a 3d character and then they are moved with respect to the time, due to which we saw an illusion of a three dimensional movie more like a real world.

So that means that in reality the animated movies are 4 dimensional i-e: movement of 3d characters over the fourth dimension time.

Image Formation on Camera

How human eye works?

Before we discuss , the image formation on analog and digital cameras , we have to first discuss the image formation on human eye. Because the basic principle that is followed by the cameras has been taken from the way , the human eye works.

When light falls upon the particular object , it is reflected back after striking through the object. The rays of light when passed through the lens of eye , form a particular angle , and the image is formed on the retina which is the back side of the wall. The image that is formed is inverted. This image is then interpreted by the brain and that makes us able to understand things. Due to angle formation , we are able to perceive the height and depth of the object we are seeing. This has been more explained in the tutorial of perspective transformation.

As you can see in the above figure, that when sun light falls on the object (in this case the object is a face), it is reflected back and different rays form different angle when they are passed through the lens and an invert image of the object has been formed on the back wall. The last portion of the figure denotes that the object has been interpreted by the brain and re-inverted.

Now lets take our discussion back to the image formation on analog and digital cameras.

Image formation on analog cameras

In analog cameras , the image formation is due to the chemical reaction that takes place on the strip that is used for image formation.

A 35mm strip is used in analog camera. It is denoted in the figure by 35mm film cartridge. This strip is coated with silver halide ( a chemical substance).

A 35mm strip is used in analog camera. It is denoted in the figure by 35mm film cartridge. This strip is coated with silver halide ( a chemical substance).

Light is nothing but just the small particles known as photon particles.So when these photon particles are passed through the camera, it reacts with the silver halide particles on the strip and it results in the silver which is the negative of the image.

In order to understand it better , have a look at this equation.

Photons (light particles) + silver halide ? silver ? image negative.

This is just the basics, although image formation involves many other concepts regarding the passing of light inside , and the concepts of shutter and shutter speed and aperture and its opening but for now we will move on to the next part. Although most of these concepts have been discussed in our tutorial of shutter and aperture.

This is just the basics, although image formation involves many other concepts regarding the passing of light inside , and the concepts of shutter and shutter speed and aperture and its opening but for now we will move on to the next part. Although most of these concepts have been discussed in our tutorial of shutter and aperture.

Image formation on digital cameras

In the digital cameras , the image formation is not due to the chemical reaction that take place , rather it is a bit more complex then this. In the digital camera , a CCD array of sensors is used for the image formation.

Image formation through CCD array

CCD stands for charge-coupled device. It is an image sensor, and like other sensors it senses the values and converts them into an electric signal. In case of CCD it senses the image and convert it into electric signal e.t.c.

This CCD is actually in the shape of array or a rectangular grid. It is like a matrix with each cell in the matrix contains a censor that senses the intensity of photon.

Like analog cameras , in the case of digital too , when light falls on the object , the light reflects back after striking the object and allowed to enter inside the camera.

Each sensor of the CCD array itself is an analog sensor. When photons of light strike on the chip , it is held as a small electrical charge in each photo sensor. The response of each sensor is directly equal to the amount of light or (photon) energy striked on the surface of the sensor.

Since we have already define an image as a two dimensional signal and due to the two dimensional formation of the CCD array , a complete image can be achieved from this CCD array.

It has limited number of sensors , and it means a limited detail can be captured by it. Also each sensor can have only one value against the each photon particle that strike on it.

So the number of photons striking(current) are counted and stored. In order to measure accurately these , external CMOS sensors are also attached with CCD array.

Introduction to pixel

The value of each sensor of the CCD array refers to each the value of the individual pixel. The number of sensors = number of pixels. It also means that each sensor could have only one and only one value.

Storing image

The charges stored by the CCD array are converted to voltage one pixel at a time. With the help of additional circuits , this voltage is converted into a digital information and then it is stored.

Each company that manufactures digital camera, make their own CCD sensors. That include , Sony , Mistubishi , Nikon ,Samsung , Toshiba , FujiFilm , Canon e.t.c.

Apart from the other factors , the quality of the image captured also depends on the type and quality of the CCD array that has been used.

Camera Mechansim

In this tutorial, we will discuss some of the basic camera concepts, like aperture , shutter , shutter speed , ISO and we will discuss the collective use of these concepts to capture a good image.

Aperture

Aperture is a small opening which allows the light to travel inside into camera. Here is the picture of aperture.

You will see some small blades like stuff inside the aperture. These blades create a octagonal shape that can be opened closed. And thus it make sense that , the more blades will open, the hole from which the light would have to pass would be bigger. The bigger the hole , the more light is allowed to enter.

Effect

The effect of the aperture directly corresponds to brightness and darkness of an image. If the aperture opening is wide , it would allow more light to pass into the camera. More light would result in more photons, which ultimately result in a brighter image.

The example of this is shown below

Consider these two photos

The one on the right side looks brighter, it means that when it was captured by the camera , the aperture was wide open. As compare to the other picture on the left side , which is very dark as compare to the first one, that shows that when that image was captured, its aperture was not wide open.

Size

Now lets discuss the maths behind the aperture. The size of the aperture is denoted by a f value. And it is inversely proportional to the opening of aperture.

Here are the two equations , that best explain this concept.

Large aperture size = Small f value

Small aperture size = Greater f value

Pictorially it can be represented as:

Shutter

After the aperture , there comes the shutter. The light when allowed to pass from the aperture , falls directly on to the shutter. Shutter is actually a cover, a closed window , or can be thought of as a curtain. Remember when we talk about the CCD array sensor on which the image is formed. Well behind the shutter is the sensor. So shutter is the only thing that is between the image formation and the light , when it is passed from aperture.

As soon as the shutter is open , light falls on the image sensor , and the image is formed on the array.

Effect

If the shutter allows light to pass a bit longer , the image would be brighter. Similarly a darker picture is produced , when a shutter is allowed to move very quickly and hence, the light that is allowed to pass has very less photons , and the image that is formed on the CCD array sensor is very dark.

Shutter has further two main concepts:

Shutter Speed

Shutter time

Shutter speed

The shutter speed can be referred to as the number of times the shutter get open or close. Remember we are not talking about for how long the shutter get open or close.

Shutter time

The shutter time can be defined as

When the shutter is open , then the amount of wait time it take till it is closed is called shutter time.

In this case we are not talking about how many times , the shutter got open or close , but we are talking about for how much time does it remain wide open.

For example:

We can better understand these two concepts in this way. That lets say that a shutter opens 15 times and then get closed, and for each time it opens for 1 second and then get closed. In this example , 15 is the shutter speed and 1 second is the shutter time.

Relationship

The relationship between shutter speed and shutter time is that they are both inversely proportional to each other.

This relationship can be defined in the equation below.

More shutter speed = less shutter time

Less shutter speed = more shutter time.

Explanation:

The lesser the time required , the more is the speed. And the greater the time required , the less is the speed.

Applications

These two concepts together make a variety of applications. Some of them are given below.

Fast moving objects:

If you were to capture the image of a fast moving object , could be a car or anything. The adjustment of shutter speed and its time would effect a lot.

So , in order to capture an image like this, we will make two amendments:

Increase shutter speed

Decrease shutter time

What happens is , that when we increase shutter speed , the more number of times , the shutter would open or close. It means different samples of light would allow to pass in. And when we decrease shutter time , it means we will immediately captures the scene, and close the shutter gate.

If you will do this , you get a crisp image of a fast moving object.

In order to understand it , we will look at this example. Suppose you want to capture the image of fast moving water fall.

You set your shutter speed to 1 second and you capture a photo. This is what you get

Then you set your shutter speed to a faster speed and you get.

Then again you set your shutter speed to even more faster and you get.

You can see in the last picture , that we have increase our shutter speed to very fast, that means that a shutter get opened or closed in 200th of 1 second and so we got a crisp image.

ISO

ISO factor is measured in numbers. It denotes the sensitivity of light to camera. If ISO number is lowered , it means our camera is less sensitive to light and if the ISO number is high, it means it is more senstivie.

Effect

The higher is the ISO , the more brighter the picture would be. IF ISO is set to 1600 , the picture would be very brighter and vice versa.

Side effect

If the ISO increases, the noise in the image also increases. Today most of the camera manufacturing companies are working on removing the noise from the image when ISO is set to higher speed.

Concept of Pixel

Pixel

Pixel is the smallest element of an image. Each pixel correspond to any one value. In an 8-bit gray scale image, the value of the pixel between 0 and 255. The value of a pixel at any point correspond to the intensity of the light photons striking at that point. Each pixel store a value proportional to the light intensity at that particular location.

PEL

A pixel is also known as PEL. You can have more understanding of the pixel from the pictures given below.

In the above picture, there may be thousands of pixels, that together make up this image. We will zoom that image to the extent that we are able to see some pixels division. It is shown in the image below.

In the above picture, there may be thousands of pixels, that together make up this image. We will zoom that image to the extent that we are able to see some pixels division. It is shown in the image below.

Relation ship with CCD array

We have seen that how an image is formed in the CCD array. So a pixel can also be defined as

The smallest division the CCD array is also known as pixel.

Each division of CCD array contains the value against the intensity of the photon striking to it. This value can also be called as a pixel

Calculation of total number of pixels

We have define an image as a two dimensional signal or matrix. Then in that case the number of PEL would be equal to the number of rows multiply with number of columns.

This can be mathematically represented as below:

Total number of pixels = number of rows ( X ) number of columns

Or we can say that the number of (x,y) coordinate pairs make up the total number of pixels.

We will look in more detail in the tutorial of image types , that how do we calculate the pixels in a color image.

Gray level

The value of the pixel at any point denotes the intensity of image at that location , and that is also known as gray level.

We will see in more detail about the value of the pixels in the image storage and bits per pixel tutorial, but for now we will just look at the concept of only one pixel value.

Pixel value.(0)

As it has already been define in the beginning of this tutorial , that each pixel can have only one value and each value denotes the intensity of light at that point of the image.

We will now look at a very unique value 0. The value 0 means absence of light. It means that 0 denotes dark, and it further means that when ever a pixel has a value of 0, it means at that point , black color would be formed.

Have a look at this image matrix

Now this image matrix has all filled up with 0. All the pixels have a value of 0. If we were to calculate the total number of pixels form this matrix , this is how we are going to do it.

Total no of pixels = total no. of rows X total no. of columns

= 3 X 3

= 9.

It means that an image would be formed with 9 pixels, and that image would have a dimension of 3 rows and 3 column and most importantly that image would be black.

The resulting image that would be made would be something like this

Now why is this image all black. Because all the pixels in the image had a value of 0.

Perspective Transformation

When human eyes see near things they look bigger as compare to those who are far away. This is called perspective in a general way. Whereas transformation is the transfer of an object e.t.c from one state to another.

So overall , the perspective transformation deals with the conversion of 3d world into 2d image. The same principle on which human vision works and the same principle on which the camera works.

We will see in detail about why this happens , that those objects which are near to you look bigger , while those who are far away , look smaller even though they look bigger when you reach them.

We will start this discussion by the concept of frame of reference:

Frame of reference:

Frame of reference is basically a set of values in relation to which we measure something.

5 frames of reference

In order to analyze a 3d world/image/scene, 5 different frame of references are required.

Object

World

Camera

Image

Pixel

Object coordinate frame

Object coordinate frame is used for modeling objects. For example , checking if a particular object is in a proper place with respect to the other object. It is a 3d coordinate system.

World coordinate frame

World coordinate frame is used for co-relating objects in a 3 dimensional world. It is a 3d coordinate system.

Camera coordinate frame

Camera co-ordinate frame is used to relate objects with respect of the camera. It is a 3d coordinate system.

Image coordinate frame

It is not a 3d coordinate system , rather it is a 2d system. It is used to describe how 3d points are mapped in a 2d image plane.

Pixel coordinate frame

It is also a 2d coordinate system. Each pixel has a value of pixel co ordinates.

Transformation between these 5 frames

Thats how a 3d scene is transformed into 2d , with image of pixels.

Now we will explain this concept mathematically.

Where

Y = 3d object

y = 2d Image

f = focal length of the camera

Z = distance between image and the camera

Now there are two different angles formed in this transform which are represented by Q.

The first angle is

Where minus denotes that image is inverted. The second angle that is formed is:

Comparing these two equations we get

From this equation, we can see that when the rays of light reflect back after striking from the object , passed from the camera , an invert image is formed.

We can better understand this, with this example.

For example

Calculating the size of image formed

Suppose an image has been taken of a person 5m tall, and standing at a distance of 50m from the camera, and we have to tell that what is the size of the image of the person , with a camera of focal length is 50mm.

Solution:

Since the focal length is in millimeter , so we have to convert every thing in millimeter in order to calculate it.

So,

Y = 5000 mm.

f = 50 mm.

Z = 50000 mm.

Putting the values in the formula , we get

= -5 mm.

Again, the minus sign indicates that the image is inverted.

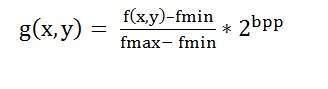

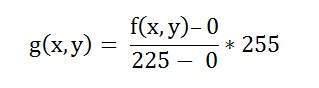

Concept of Bits Per Pixel

Bpp or bits per pixel denotes the number of bits per pixel. The number of different colors in an image is depends on the depth of color or bits per pixel.

Bits in mathematics:

Its just like playing with binary bits.

How many numbers can be represented by one bit.

0

1

How many two bits combinations can be made.

00

01

10

11

If we devise a formula for the calculation of total number of combinations that can be made from bit , it would be like this.

Where bpp denotes bits per pixel. Put 1 in the formula you get 2, put 2 in the formula , you get 4. It grows exponentionally.

Number of different colors:

Now as we said it in the beginning , that the number of different colors depend on the number of bits per pixel.

The table for some of the bits and their color is given below.

| Bits per pixel | Number of colors |

|---|

| 1 bpp | 2 colors |

| 2 bpp | 4 colors |

| 3 bpp | 8 colors |

| 4 bpp | 16 colors |

| 5 bpp | 32 colors |

| 6 bpp | 64 colors |

| 7 bpp | 128 colors |

| 8 bpp | 256 colors |

| 10 bpp | 1024 colors |

| 16 bpp | 65536 colors |

| 24 bpp | 16777216 colors (16.7 million colors) |

| 32 bpp | 4294967296 colors (4294 million colors) |

This table shows different bits per pixel and the amount of color they contain.

Shades

You can easily notice the pattern of the exponentional growth. The famous gray scale image is of 8 bpp , means it has 256 different colors in it or 256 shades.

Shades can be represented as:

Color images are usually of the 24 bpp format , or 16 bpp.

We will see more about other color formats and image types in the tutorial of image types.

Color values:

We have previously seen in the tutorial of concept of pixel , that 0 pixel value denotes black color.

Black color:

Remember , 0 pixel value always denotes black color. But there is no fixed value that denotes white color.

White color:

The value that denotes white color can be calculated as :

In case of 1 bpp , 0 denotes black , and 1 denotes white.

In case 8 bpp , 0 denotes black , and 255 denotes white.

Gray color:

When you calculate the black and white color value , then you can calculate the pixel value of gray color.

Gray color is actually the mid point of black and white. That said,

In case of 8bpp, the pixel value that denotes gray color is 127 or 128bpp (if you count from 1, not from 0).

Image storage requirements

After the discussion of bits per pixel , now we have every thing that we need to calculate a size of an image.

Image size

The size of an image depends upon three things.

Number of rows

Number of columns

Number of bits per pixel

The formula for calculating the size is given below.

Size of an image = rows * cols * bpp

It means that if you have an image, lets say this one:

Assuming it has 1024 rows and it has 1024 columns. And since it is a gray scale image , it has 256 different shades of gray or it has bits per pixel. Then putting these values in the formula , we get

Size of an image = rows * cols * bpp

= 1024 * 1024 * 8

= 8388608 bits.

But since its not a standard answer that we recognize , so will convert it into our format.

Converting it into bytes = 8388608 / 8 = 1048576 bytes.

Converting into kilo bytes = 1048576 / 1024 = 1024kb.

Converting into Mega bytes = 1024 / 1024 = 1 Mb.

Thats how an image size is calculated and it is stored. Now in the formula , if you are given the size of image and the bits per pixel , you can also calculate the rows and columns of the image , provided the image is square(same rows and same column).

Types of Images

There are many type of images , and we will look in detail about different types of images , and the color distribution in them.

The binary image

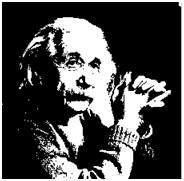

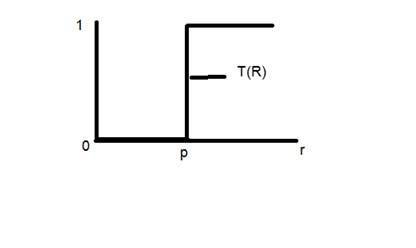

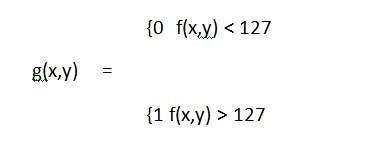

The binary image as it name states , contain only two pixel values.

0 and 1.

In our previous tutorial of bits per pixel , we have explained this in detail about the representation of pixel values to their respective colors.

Here 0 refers to black color and 1 refers to white color. It is also known as Monochrome.

Black and white image:

The resulting image that is formed hence consist of only black and white color and thus can also be called as Black and White image.

No gray level

One of the interesting this about this binary image that there is no gray level in it. Only two colors that are black and white are found in it.

Format

Binary images have a format of PBM ( Portable bit map )

2 , 3 , 4 ,5 ,6 bit color format

The images with a color format of 2 , 3 , 4 ,5 and 6 bit are not widely used today. They were used in old times for old TV displays , or monitor displays.

But each of these colors have more then two gray levels , and hence has gray color unlike the binary image.

In a 2 bit 4, in a 3 bit 8 , in a 4 bit 16, in a 5 bit 32, in a 6 bit 64 different colors are present.

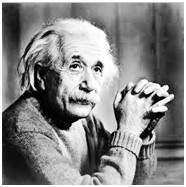

8 bit color format

8 bit color format is one of the most famous image format. It has 256 different shades of colors in it. It is commonly known as Grayscale image.

The range of the colors in 8 bit vary from 0-255. Where 0 stands for black , and 255 stands for white , and 127 stands for gray color.

This format was used initially by early models of the operating systems UNIX and the early color Macintoshes.

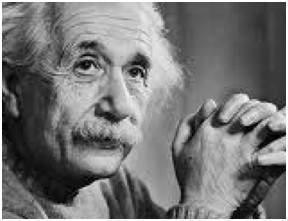

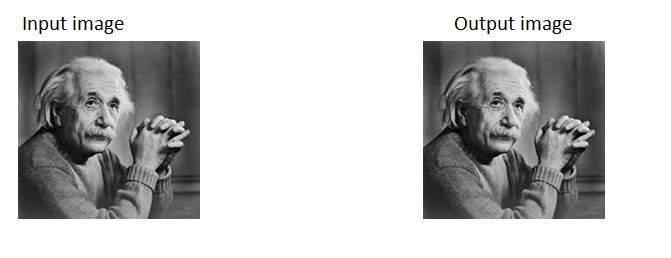

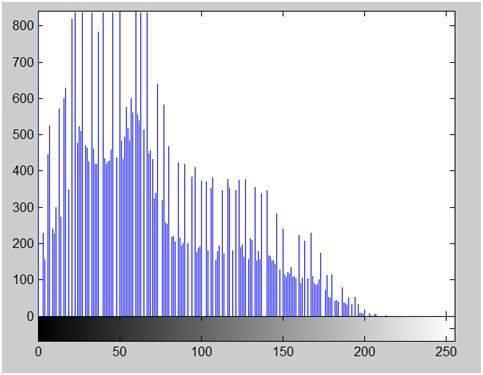

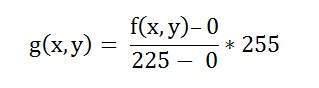

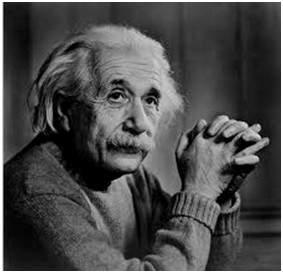

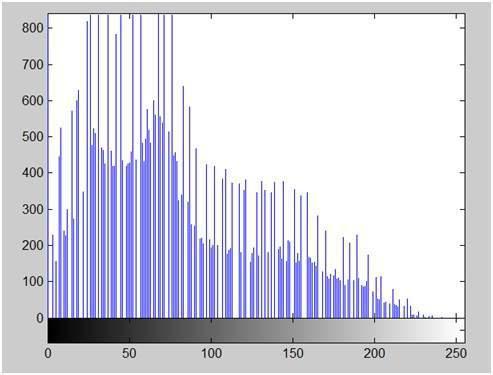

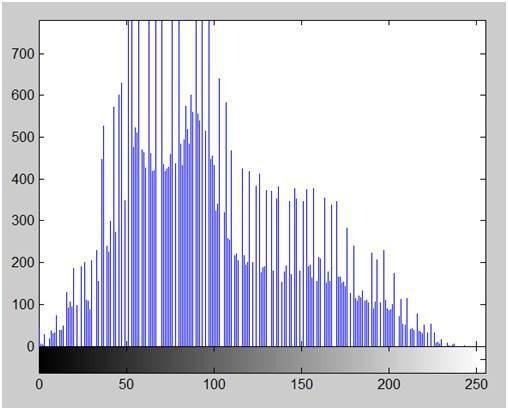

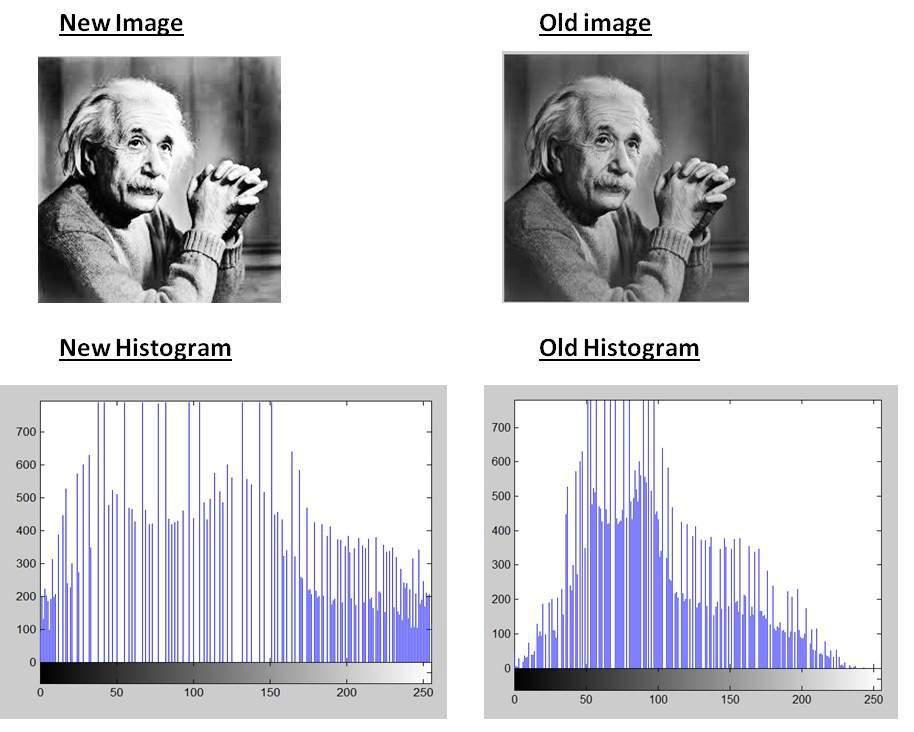

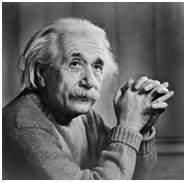

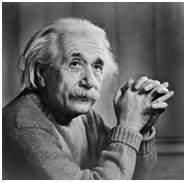

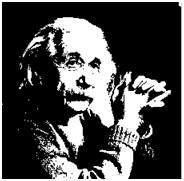

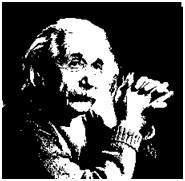

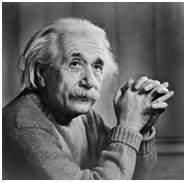

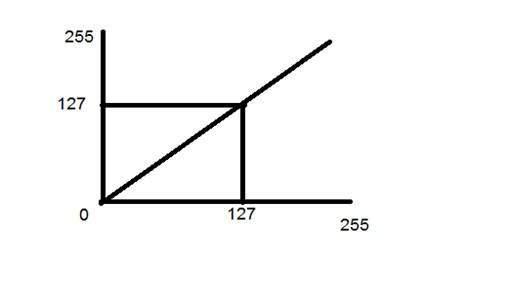

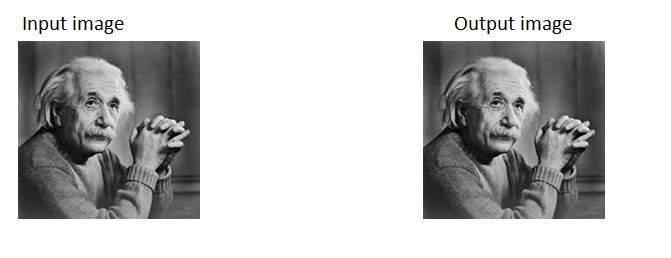

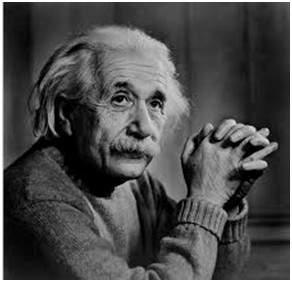

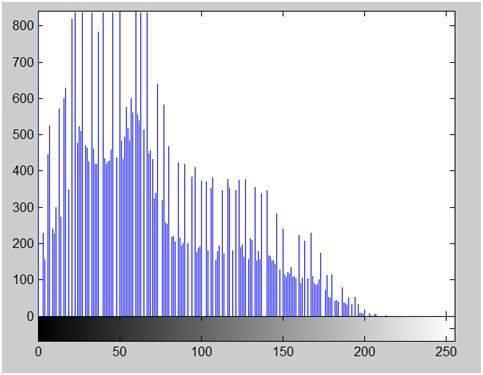

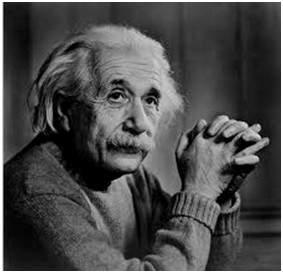

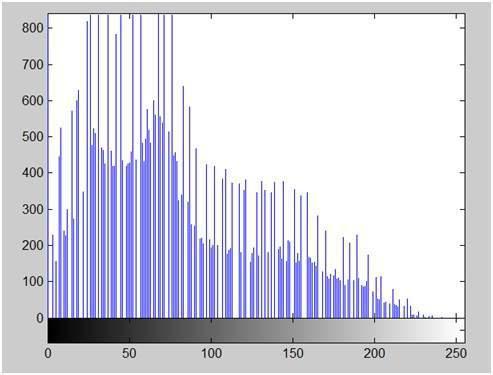

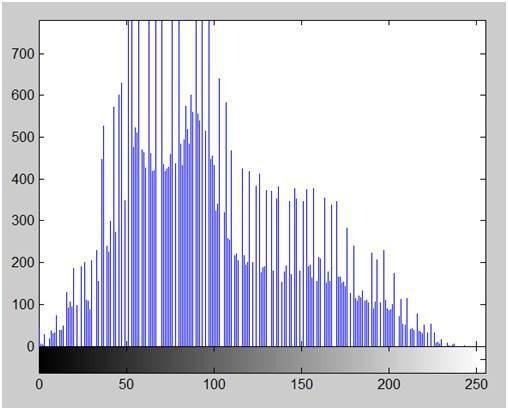

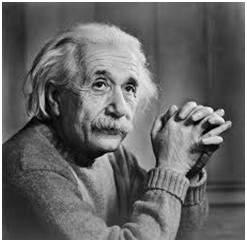

A grayscale image of Einstein is shown below:

Format

The format of these images are PGM ( Portable Gray Map ).

This format is not supported by default from windows. In order to see gray scale image , you need to have an image viewer or image processing toolbox such as Matlab.

Behind gray scale image:

As we have explained it several times in the previous tutorials , that an image is nothing but a two dimensional function , and can be represented by a two dimensional array or matrix. So in the case of the image of Einstein shown above , there would be two dimensional matrix in behind with values ranging between 0 and 255.

But thats not the case with the color images.

16 bit color format

It is a color image format. It has 65,536 different colors in it. It is also known as High color format.

It has been used by Microsoft in their systems that support more then 8 bit color format. Now in this 16 bit format and the next format we are going to discuss which is a 24 bit format are both color format.

The distribution of color in a color image is not as simple as it was in grayscale image.

A 16 bit format is actually divided into three further formats which are Red , Green and Blue. The famous (RGB) format.

It is pictorially represented in the image below.

Now the question arises , that how would you distribute 16 into three. If you do it like this,

5 bits for R , 5 bits for G , 5 bits for B

Then there is one bit remains in the end.

So the distribution of 16 bit has been done like this.

5 bits for R , 6 bits for G , 5 bits for B.

The additional bit that was left behind is added into the green bit. Because green is the color which is most soothing to eyes in all of these three colors.

Note this is distribution is not followed by all the systems. Some have introduced an alpha channel in the 16 bit.

Another distribution of 16 bit format is like this:

4 bits for R , 4 bits for G , 4 bits for B , 4 bits for alpha channel.

Or some distribute it like this

5 bits for R , 5 bits for G , 5 bits for B , 1 bits for alpha channel.

24 bit color format

24 bit color format also known as true color format. Like 16 bit color format , in a 24 bit color format , the 24 bits are again distributed in three different formats of Red , Green and Blue.

Since 24 is equally divided on 8 , so it has been distributed equally between three different color channels.

Their distribution is like this.

8 bits for R , 8 bits for G , 8 bits for B.

Behind a 24 bit image.

Unlike a 8 bit gray scale image , which has one matrix behind it, a 24 bit image has three different matrices of R , G , B.

Format

It is the most common used format. Its format is PPM ( Portable pixMap) which is supported by Linux operating system. The famous windows has its own format for it which is BMP ( Bitmap ).

Color Codes Conversion

In this tutorial , we will see that how different color codes can be combined to make other colors, and how we can covert RGB color codes to hex and vice versa.

Different color codes

All the colors here are of the 24 bit format, that means each color has 8 bits of red , 8 bits of green , 8 bits of blue , in it. Or we can say each color has three different portions. You just have to change the quantity of these three portions to make any color.

Binary color format

Color:Black

Image:

Decimal Code:

(0,0,0)

Explanation:

As it has been explained in the previous tutorials , that in an 8-bit format , 0 refers to black. So if we have to make a pure black color , we have to make all the three portion of R , G , B to 0.

Color:White

Image:

Decimal Code:

(255,255,255)

Explanation:

Since each portion of R,G,B is an 8 bit portion. So in 8-bit , the white color is formed by 255. It is explained in the tutorial of pixel. So in order to make a white color we set each portion to 255 and thats how we got a white color. By setting each of the value to 255 , we get overall value of 255 , thats make the color white.

RGB color model:

Color:Red

Image:

Decimal Code:

(255,0,0)

Explanation:

Since we need only red color , so we zero out the rest of the two portions which are green and blue , and we set the red portion to its maximum which is 255.

Color:Green

Image:

Decimal Code:

(0,255,0)

Explanation:

Since we need only green color , so we zero out the rest of the two portions which are red and blue , and we set the green portion to its maximum which is 255.

Color: Blue

Image:

Decimal Code:

(0,0,255)

Explanation:

Since we need only blue color , so we zero out the rest of the two portions which are red and green , and we set the blue portion to its maximum which is 255

Gray color:

Color: Gray

Image:

Decimal Code:

(128,128,128)

Explanation:

As we have already defined in our tutorial of pixel , that gray color Is actually the mid point. In an 8-bit format , the mid point is 128 or 127. In this case we choose 128. So we set each of the portion to its mid point which is 128 , and that results in overall mid value and we got gray color.

CMYK color model:

CMYK is another color model where c stands for cyan , m stands for magenta , y stands for yellow , and k for black. CMYK model is commonly used in color printers in which there are two carters of color is used. One consist of CMY and other consist of black color.

The colors of CMY can also made from changing the quantity or portion of red , green and blue.

Color: Cyan

Image:

Decimal Code:

(0,255,255)

Explanation:

Cyan color is formed from the combination of two different colors which are Green and blue. So we set those two to maximum and we zero out the portion of red. And we get cyan color.

Color: Magenta

Image:

Decimal Code:

(255,0,255)

Explanation:

Magenta color is formed from the combination of two different colors which are Red and Blue. So we set those two to maximum and we zero out the portion of green. And we get magenta color.

Color: Yellow

Image:

Decimal Code:

(255,255,0)

Explanation:

Yellow color is formed from the combination of two different colors which are Red and Green. So we set those two to maximum and we zero out the portion of blue. And we get yellow color.

Conversion

Now we will see that how color are converted are from one format to another.

Conversion from RGB to Hex code:

Conversion from Hex to rgb is done through this method:

Take a color. E.g: White = (255, 255 , 255).

Take the first portion e.g 255.

Divide it by 16. Like this:

Take the two numbers below line , the factor , and the remainder. In this case it is 15 � 15 which is FF.

Repeat the step 2 for the next two portions.

Combine all the hex code into one.

Answer: #FFFFFF

Conversion from Hex to RGB:

Conversion from hex code to rgb decimal format is done in this way.

Take a hex number. E.g: #FFFFFF

Break this number into 3 parts: FF FF FF

Take the first part and separate its components: F F

Convert each of the part separately into binary: (1111) ( 1111)

Now combine the individual binaries into one: 11111111

Convert this binary into decimal: 255

Now repeat step 2 , two more times.

The value comes in the first step is R , second one is G, and the third one belongs to B.

Answer: ( 255 , 255 , 255 )

Common colors and their Hex code has been given in this table.

| Color | Hex Code |

|---|

| Black | #000000 |

| White | #FFFFFF |

| Gray | #808080 |

| Red | #FF0000 |

| Green | #00FF00 |

| Blue | #0000FF |

| Cyan | #00FFFF |

| Magenta | #FF00FF |

| Yellow | #FFFF00 |

Grayscael to RGB Conversion

We have already define the RGB color model and gray scale format in our tutorial of Image types. Now we will convert an color image into a grayscale image.

There are two methods to convert it. Both has their own merits and demerits. The methods are:

Average method

Average method is the most simple one. You just have to take the average of three colors. Since its an RGB image , so it means that you have add r with g with b and then divide it by 3 to get your desired grayscale image.

Its done in this way.

Grayscale = (R + G + B) / 3

For example:

If you have an color image like the image shown above and you want to convert it into grayscale using average method. The following result would appear.

Explanation

There is one thing to be sure , that something happens to the original works. It means that our average method works. But the results were not as expected. We wanted to convert the image into a grayscale , but this turned out to be a rather black image.

Problem

This problem arise due to the fact , that we take average of the three colors. Since the three different colors have three different wavelength and have their own contribution in the formation of image , so we have to take average according to their contribution , not done it averagely using average method. Right now what we are doing is this,

33% of Red, 33% of Green, 33% of Blue

We are taking 33% of each, that means , each of the portion has same contribution in the image. But in reality thats not the case. The solution to this has been given by luminosity method.

Weighted method or luminosity method

You have seen the problem that occur in the average method. Weighted method has a solution to that problem. Since red color has more wavelength of all the three colors , and green is the color that has not only less wavelength then red color but also green is the color that gives more soothing effect to the eyes.

It means that we have to decrease the contribution of red color , and increase the contribution of the green color , and put blue color contribution in between these two.

So the new equation that form is:

New grayscale image = ( (0.3 * R) + (0.59 * G) + (0.11 * B) ).

According to this equation , Red has contribute 30% , Green has contributed 59% which is greater in all three colors and Blue has contributed 11%.

Applying this equation to the image, we get this

Original Image:

Grayscale Image:

Explanation

As you can see here , that the image has now been properly converted to grayscale using weighted method. As compare to the result of average method , this image is more brighter.

Concept of Sampling

Conversion of analog signal to digital signal:

The output of most of the image sensors is an analog signal, and we can not apply digital processing on it because we can not store it. We can not store it because it requires infinite memory to store a signal that can have infinite values.

So we have to convert an analog signal into a digital signal.

To create an image which is digital , we need to covert continuous data into digital form. There are two steps in which it is done.

We will discuss sampling now , and quantization will be discussed later on but for now on we will discuss just a little about the difference between these two and the need of these two steps.

Basic idea:

The basic idea behind converting an analog signal to its digital signal is

to convert both of its axis (x,y) into a digital format.

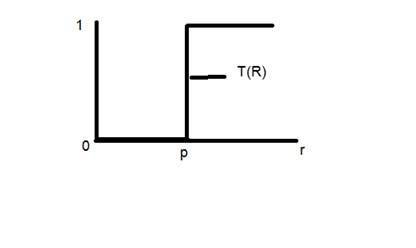

Since an image is continuous not just in its co-ordinates (x axis) , but also in its amplitude (y axis), so the part that deals with the digitizing of co-ordinates is known as sampling. And the part that deals with digitizing the amplitude is known as quantization.

Sampling.

Sampling has already been introduced in our tutorial of introduction to signals and system. But we are going to discuss here more.

Here what we have discussed of the sampling.

The term sampling refers to take samples

We digitize x axis in sampling

It is done on independent variable

In case of equation y = sin(x), it is done on x variable

It is further divided into two parts , up sampling and down sampling

If you will look at the above figure , you will see that there are some random variations in the signal. These variations are due to noise. In sampling we reduce this noise by taking samples. It is obvious that more samples we take , the quality of the image would be more better, the noise would be more removed and same happens vice versa.

However , if you take sampling on the x axis , the signal is not converted to digital format , unless you take sampling of the y-axis too which is known as quantization. The more samples eventually means you are collecting more data, and in case of image , it means more pixels.

Relation ship with pixels

Since a pixel is a smallest element in an image. The total number of pixels in an image can be calculated as

Pixels = total no of rows * total no of columns.

Lets say we have total of 25 pixels , that means we have a square image of 5 X 5. Then as we have dicussed above in sampling , that more samples eventually result in more pixels. So it means that of our continuous signal , we have taken 25 samples on x axis. That refers to 25 pixels of this image.

This leads to another conclusion that since pixel is also the smallest division of a CCD array. So it means it has a relationship with CCD array too , which can be explained as this.

Relationship with CCD array

The number of sensors on a CCD array is directly equal to the number of pixels. And since we have concluded that the number of pixels is directly equal to the number of samples, that means that number sample is directly equal to the number of sensors on CCD array.

Oversampling.

In the beginning we have define that sampling is further categorize into two types. Which is up sampling and down sampling. Up sampling is also called as over sampling.

The oversampling has a very deep application in image processing which is known as Zooming.

Zooming

We will formally introduce zooming in the upcoming tutorial , but for now on , we will just briefly explain zooming.

Zooming refers to increase the quantity of pixels , so that when you zoom an image , you will see more detail.

The increase in the quantity of pixels is done through oversampling. The one way to zoom is , or to increase samples, is to zoom optically , through the motor movement of the lens and then capture the image. But we have to do it , once the image has been captured.

There is a difference between zooming and sampling.

The concept is same , which is, to increase samples. But the key difference is that while sampling is done on the signals , zooming is done on the digital image.

Pixel Resolution

Before we define pixel resolution, it is necessary to define a pixel.

Pixel

We have already defined a pixel in our tutorial of concept of pixel, in which we define a pixel as the smallest element of an image. We also defined that a pixel can store a value proportional to the light intensity at that particular location.

Now since we have defined a pixel, we are going to define what is resolution.

Resolution

The resolution can be defined in many ways. Such as pixel resolution , spatial resolution , temporal resolution , spectral resolution. Out of which we are going to discuss pixel resolution.

You have probably seen that in your own computer settings , you have monitor resolution of 800 x 600 , 640 x 480 e.t.c

In pixel resolution , the term resolution refers to the total number of count of pixels in an digital image. For example. If an image has M rows and N columns , then its resolution can be defined as M X N.

If we define resolution as the total number of pixels , then pixel resolution can be defined with set of two numbers. The first number the width of the picture , or the pixels across columns , and the second number is height of the picture , or the pixels across its width.

We can say that the higher is the pixel resolution , the higher is the quality of the image.

We can define pixel resolution of an image as 4500 X 5500.

Megapixels

We can calculate mega pixels of a camera using pixel resolution.

Column pixels (width ) X row pixels ( height ) / 1 Million.

The size of an image can be defined by its pixel resolution.

Size = pixel resolution X bpp ( bits per pixel )

Calculating the mega pixels of the camera

Lets say we have an image of dimension: 2500 X 3192.

Its pixel resolution = 2500 * 3192 = 7982350 bytes.

Dividing it by 1 million = 7.9 = 8 mega pixel (approximately).

Aspect ratio

Another important concept with the pixel resolution is aspect ratio.

Aspect ratio is the ratio between width of an image and the height of an image. It is commonly explained as two numbers separated by a colon (8:9). This ratio differs in different images , and in different screens. The common aspect ratios are:

1.33:1, 1.37:1, 1.43:1, 1.50:1, 1.56:1, 1.66:1, 1.75:1, 1.78:1, 1.85:1, 2.00:1, e.t.c

Advantage:

Aspect ratio maintains a balance between the appearance of an image on the screen , means it maintains a ratio between horizontal and vertical pixels. It does not let the image to get distorted when aspect ratio is increased.

For example:

This is a sample image , which has 100 rows and 100 columns. If we wish to make is smaller, and the condition is that the quality remains the same or in other way the image does not get distorted , here how it happens.

Original image:

Changing the rows and columns by maintain the aspect ratio in MS Paint.

Result

Smaller image , but with same balance.

You have probably seen aspect ratios in the video players, where you can adjust the video according to your screen resolution.

Finding the dimensions of the image from aspect ratio:

Aspect ratio tells us many things. With the aspect ratio, you can calculate the dimensions of the image along with the size of the image.

For example

If you are given an image with aspect ratio of 6:2 of an image of pixel resolution of 480000 pixels given the image is an gray scale image.

And you are asked to calculate two things.

Solution:

Given:

Aspect ratio: c:r = 6:2

Pixel resolution: c * r = 480000

Bits per pixel: grayscale image = 8bpp

Find:

Number of rows = ?

Number of cols = ?

Solving first part:

Solving 2nd part:

Size = rows * cols * bpp

Size of image in bits = 400 * 1200 * 8 = 3840000 bits

Size of image in bytes = 480000 bytes

Size of image in kilo bytes = 48 kb (approx).

Concept of Zooming

In this tutorial we are going to introduce the concept of zooming , and the common techniques that are used to zoom an image.

Zooming

Zooming simply means enlarging a picture in a sense that the details in the image became more visible and clear. Zooming an image has many wide applications ranging from zooming through a camera lens , to zoom an image on internet e.t.c.

For example

is zoomed into

You can zoom something at two different steps.

The first step includes zooming before taking an particular image. This is known as pre processing zoom. This zoom involves hardware and mechanical movement.

The second step is to zoom once an image has been captured. It is done through many different algorithms in which we manipulate pixels to zoom in the required portion.

We will discuss them in detail in the next tutorial.

Optical Zoom vs digital Zoom

These two types of zoom are supported by the cameras.

Optical Zoom:

The optical zoom is achieved using the movement of the lens of your camera. An optical zoom is actually a true zoom. The result of the optical zoom is far better then that of digital zoom. In optical zoom , an image is magnified by the lens in such a way that the objects in the image appear to be closer to the camera. In optical zoom the lens is physically extend to zoom or magnify an object.

Digital Zoom:

Digital zoom is basically image processing within a camera. During a digital zoom , the center of the image is magnified and the edges of the picture got crop out. Due to magnified center , it looks like that the object is closer to you.

During a digital zoom , the pixels got expand , due to which the quality of the image is compromised.

The same effect of digital zoom can be seen after the image is taken through your computer by using an image processing toolbox / software, such as Photoshop.

The following picture is the result of digital zoom done through one of the following methods given below in the zooming methods.

Now since we are leaning digital image processing , we will not focus , on how an image can be zoomed optically using lens or other stuff. Rather we will focus on the methods, that enable to zoom a digital image.

Zooming methods:

Although there are many methods that does this job , but we are going to discuss the most common of them here.

They are listed below.

All these three methods are formally introduced in the next tutorial.

Zooming Methods

In this tutorial we are going to formally introduce three methods of zooming that were introduced in the tutorial of Introduction to zooming.

Methods

Each of the methods have their own advantages and disadvantages. We will start by discussing pixel replication.

Method 1: Pixel replication:

Introduction:

It is also known as Nearest neighbor interpolation. As its name suggest , in this method , we just replicate the neighboring pixels. As we have already discussed in the tutorial of Sampling , that zooming is nothing but increase amount of sample or pixels. This algorithm works on the same principle.

Working:

In this method we create new pixels form the already given pixels. Each pixel is replicated in this method n times row wise and column wise and you got a zoomed image. Its as simple as that.

For example:

if you have an image of 2 rows and 2 columns and you want to zoom it twice or 2 times using pixel replication, here how it can be done.

For a better understanding , the image has been taken in the form of matrix with the pixel values of the image.

The above image has two rows and two columns, we will first zoom it row wise.

Row wise zooming:

When we zoom it row wise , we will just simple copy the rows pixels to its adjacent new cell.

Here how it would be done.

As you can that in the above matrix , each pixel is replicated twice in the rows.

Column size zooming:

The next step is to replicate each of the pixel column wise, that we will simply copy the column pixel to its adjacent new column or simply below it.

Here how it would be done.

New image size:

As it can be seen from the above example , that an original image of 2 rows and 2 columns has been converted into 4 rows and 4 columns after zooming. That means the new image has a dimensions of

(Original image rows * zooming factor, Original Image cols * zooming factor)

Advantage and disadvantage:

One of the advantage of this zooming technique is , it is very simple. You just have to copy the pixels and nothing else.

The disadvantage of this technique is that image got zoomed but the output is very blurry. And as the zooming factor increased , the image got more and more blurred. That would eventually result in fully blurred image.

Method 2: Zero order hold

Introduction

Zero order hold method is another method of zooming. It is also known as zoom twice. Because it can only zoom twice. We will see in the below example that why it does that.

Working

In zero order hold method , we pick two adjacent elements from the rows respectively and then we add them and divide the result by two, and place their result in between those two elements. We first do this row wise and then we do this column wise.

For example

Lets take an image of the dimensions of 2 rows and 2 columns and zoom it twice using zero order hold.

First we will zoom it row wise and then column wise.

Row wise zooming

As we take the first two numbers : (2 + 1) = 3 and then we divide it by 2, we get 1.5 which is approximated to 1. The same method is applied in the row 2.

Column wise zooming

We take two adjacent column pixel values which are 1 and 3. We add them and got 4. 4 is then divided by 2 and we get 2 which is placed in between them. The same method is applied in all the columns.

New image size

As you can see that the dimensions of the new image are 3 x 3 where the original image dimensions are 2 x 2. So it means that the dimensions of the new image are based on the following formula

(2(number of rows) minus 1) X (2(number of columns) minus 1)

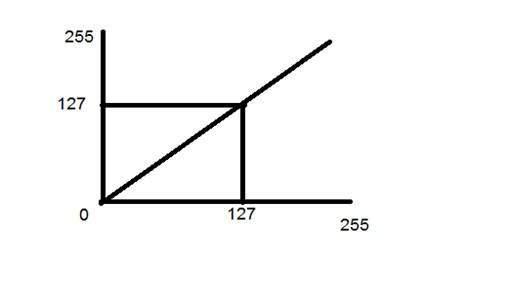

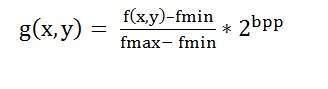

Advantages and disadvantage.